In 2026, the most successful tech founders are those who recognize that data - not software - is the real product. With AI rapidly reducing the cost of replicating software features, companies with unique, proprietary data hold the upper hand. Buyers are paying massive premiums - up to 12x revenue multiples - for businesses that have built "data moats" that competitors can't replicate.

Key takeaways:

- AI-native businesses with exclusive data assets are thriving, while traditional SaaS companies face shrinking valuations.

- Proprietary data drives higher customer retention, faster growth, and better pricing power.

- Companies that monetize data through usage-based pricing or standalone data products are seeing stronger margins and faster ARR growth.

The bottom line: If your product gets weaker as AI improves, you're selling a disposable wrapper. If smarter AI makes your data more valuable, you've built a moat - and that's what acquirers want.

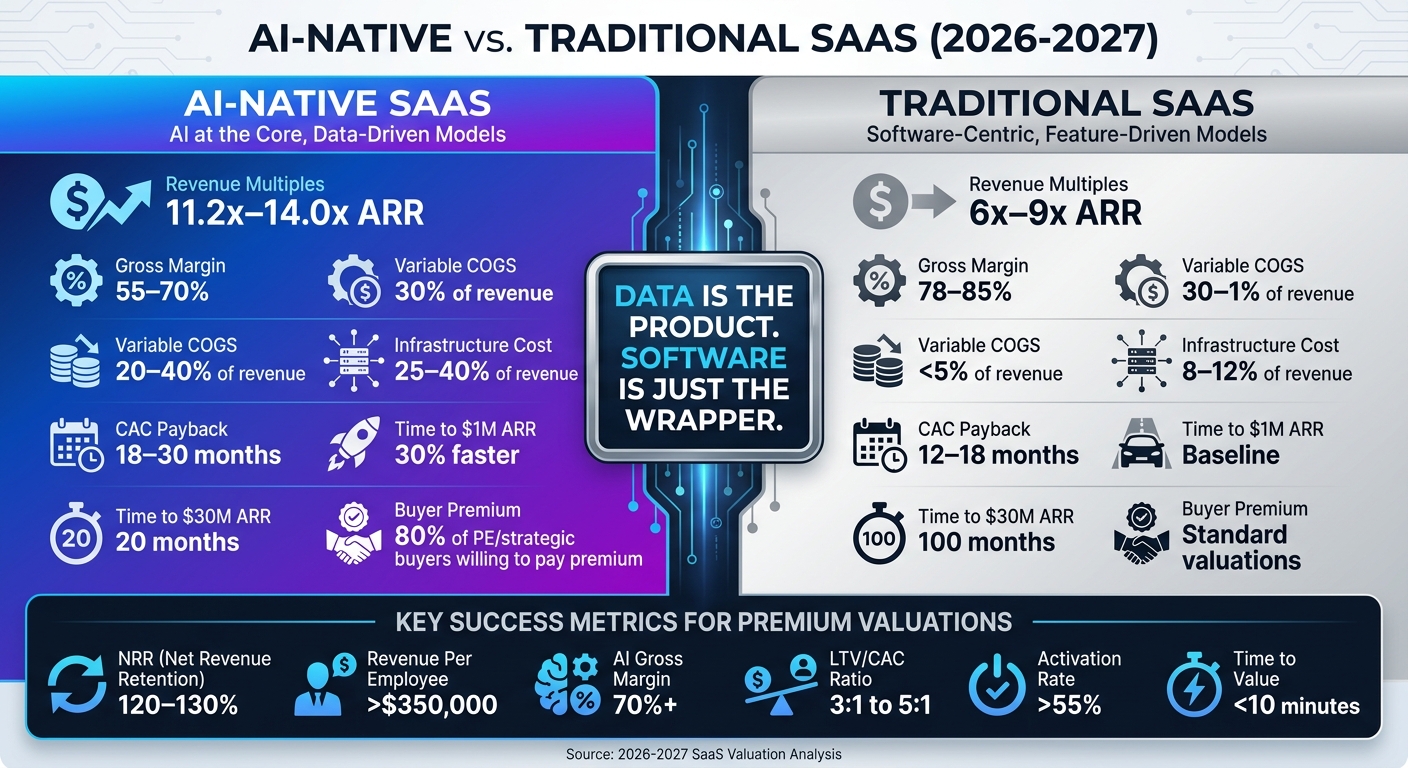

AI-Native vs Traditional SaaS: Valuation Multiples and Key Metrics Comparison 2026-2027

The New Moat That’s Driving Returns in Vertical SaaS

sbb-itb-9cd970b

1. Data-Driven Growth: Building the Foundation for Higher Valuations

When it comes to driving value, data takes center stage - far beyond just the software interface. The divide between AI-native companies and traditional SaaS has grown wider than ever. AI-native products are now trading at revenue multiples of 11.2x–14.0x ARR, compared to the 6x–9x ARR typical for traditional SaaS businesses [6]. This isn't just about advanced technology - it's about how these companies use data not merely to report on growth but to fuel it. It's no surprise that 80% of private equity and strategic buyers are willing to pay a premium for AI-native SaaS [3]. They're essentially investing in businesses that have turned data collection into a growth engine.

Data doesn't just track progress - it drives it. For instance, increasing NRR (Net Revenue Retention) from 95% to 115% can significantly boost valuation multiples [3]. As Khaled Azar from Livmo aptly puts it:

"The multiple moves when AI shows up in your retention and expansion numbers - not when it shows up in your product roadmap slides" [3].

This is why AI-native startups are hitting $1M ARR 30% faster and scaling to $30M ARR in just 20 months instead of 100 [9]. Let’s dive into how data signals are powering better lead qualification and faster onboarding.

1.1. Product-Qualified Leads from Data Signals

Traditional marketing qualified leads (MQLs) often convert at a modest 5–10%, but product-qualified leads (PQLs) - driven by real usage data - achieve conversion rates of 25–30% [11]. Instead of relying on indirect signals like whitepaper downloads, data-driven companies focus on what users actually do within the product.

Take Slack, for example. They found that teams sending 2,000 messages were significantly less likely to churn, and this became their key activation metric. Similarly, Figma identified that designers who created three files in their first week were much more likely to stick around long-term [5]. These insights allow companies to identify serious users versus casual browsers, reinforcing the case for data-driven strategies in achieving premium valuations.

Top-performing companies evaluate PQLs across five key dimensions:

- Engagement depth (how often and broadly the product is used)

- Team expansion (e.g., invites sent)

- Value realization (milestones achieved)

- Upgrade signals (like hitting usage limits)

- Firmographic fit (alignment with the ideal customer profile) [10]

When users hit a "Hot PQL" threshold - such as viewing the pricing page after reaching a usage limit - real-time alerts allow sales teams to act quickly. For instance, personalized outreach might sound like:

"I noticed your team created 15 projects and added 8 members" [10].

This targeted approach can drastically accelerate sales cycles, with PQL-to-closed-won conversion rates reaching 30–50% [10].

1.2. Usage Data for Faster Onboarding

By 2026, user retention will depend on delivering core value within 5–10 minutes. Fail to do so, and users are likely to abandon the product [12]. AI-native products that meet this challenge see 47% higher activation rates and 62% fewer support tickets compared to traditional SaaS offerings [13]. While the average industry activation rate hovers around 33%, the top 10% of SaaS companies achieve rates of 65%+ [11].

Notion provides a great example. By analyzing adoption data, they discovered that their "Table View" feature was used 4–5x more than the "Board View." Acting on this insight, they prioritized database-related features, which significantly boosted retention among power users [5]. This kind of data-driven onboarding removes friction without adding complexity, like extra tutorials. Considering that 80% of features in most software products are rarely or never used [14], the best companies focus on the critical 12% of features that drive 80% of daily usage [14].

The shift to a "strategic freemium" model further highlights this approach. Companies like Slack and Notion now set usage limits within their free tiers. When users hit these caps, it naturally demonstrates the product's value, making upgrade conversations almost effortless [11]. Combined with AI-driven onboarding that adapts to user behavior, this creates a "personal data flywheel." Each interaction improves the product experience, reinforcing its value and helping founders secure higher valuations [7][8].

2. Building Data Moats for Competitive Advantage

The days when coding alone could set you apart are fading fast. With AI tools now capable of generating functional code in minutes, relying solely on software features is no longer enough to ensure a competitive edge [17]. Instead, the real advantage lies in proprietary data - the kind of information that competitors can't scrape, replicate, or synthesize using large language models.

To build a strong data moat, three factors are crucial: the data must be non-synthesizable (AI can't generate it), it must grow in value over time (e.g., through historical depth), and it must play a critical role in how customers derive value [1]. Take Bloomberg Terminal as an example. Its proprietary data isn't just a feature - it shapes how financial professionals make decisions. Switching providers would mean more than just adopting a new tool; it would require rethinking entire processes, making Bloomberg's data an irreplaceable asset.

"The question is no longer just 'what does your software do?' It is 'what does your data know that no one else's data knows?'"

– Joash Boyton, Founder & Managing Director, Acquiry [21]

Epic Systems is another example of a company that has built a formidable moat. By maintaining decades of longitudinal health records for one-third of Americans - including labs, medications, and physician notes - it has created a treasure trove of legally protected data that startups simply can't replicate [1]. Similarly, Tesla's edge in autonomous driving comes from its ability to collect multi-sensor data across more than 2 billion miles of real-world driving. This data captures rare edge cases that competitors can't simulate [15].

"If the underlying model gets 10x better next year, does my product get more valuable or less? Less valuable means the model now does what you were doing. More valuable means a smarter model does more with your data. The first is a wrapper. The second is a moat."

– Lewis Lin [1]

The distinction is clear: products that act as "wrappers" lose value as base AI models improve, while those with moats grow stronger because smarter models can extract even more value from proprietary data. This difference can determine whether a company thrives with premium valuations or struggles with declining relevance.

2.1. Vertical Data Specialization

Focusing on niche markets allows companies to dominate specific verticals with highly specialized datasets. The most valuable data often falls under what industry insiders call "Dark Data" - unstructured, hard-to-access information hidden within organizations that general AI models can't reach [22]. Examples include physician notes with patient context or sensor histories from manufacturing lines.

A case in point is Luminance, a legal AI company. In April 2024, it raised $40 million in a Series B round after its technology helped London's Old Bailey criminal court cut evidence review time by four weeks. Luminance's strength lies in its deep expertise in legal workflows and proprietary datasets, which deliver measurable time savings for its 600 clients across 70 countries [18]. This kind of specialization makes it hard for generic AI tools to compete.

Another great example is OSIsoft (now AVEVA), whose "PI System" stores 20 years of sensor data from industrial production lines like semiconductor fabs. This data tracks temperature, pressure, and maintenance events unique to individual machines, making it invaluable for industrial AI applications. While a general AI model might understand semiconductor manufacturing in theory, it can't replicate the nuanced insights embedded in decades of sensor data from a specific facility [1].

The advantage of vertical specialization goes beyond data itself. Companies in these niches often target labor budgets - representing 13% of U.S. GDP - rather than just IT budgets. This opens up a much larger opportunity. By automating entire workflows instead of just assisting with tasks, these companies can shift from per-seat pricing to charging for outcomes, such as filing taxes or completing audits.

| Data Category | Irreplaceability | Collection Moat | Proprietary Logic | LLM Amplification | Score (out of 5) |

|---|---|---|---|---|---|

| Financial Market Data (Bloomberg) | 5 | 5 | 5 | 5 | 4.83 |

| Retail Scanner / POS Data (NielsenIQ) | 5 | 5 | 5 | 4 | 4.83 |

| Healthcare / Clinical Records (Epic) | 5 | 5 | 4 | 5 | 4.83 |

| Industrial IoT / Historian (AVEVA) | 5 | 5 | 4 | 4 | 4.33 |

| Legal Case Law (Westlaw) | 4 | 5 | 5 | 5 | 4.50 |

Source: Lewis Lin, 2026. Scores are unweighted averages across six criteria. [1]

2.2. Network Effects Through B2B Data Ecosystems

While vertical specialization adds depth, integrating your data into a broader ecosystem amplifies its value. The most powerful data moats don't just collect information - they create ecosystems where every new participant enhances the dataset for everyone else. This "give-to-get" model encourages users to contribute data in exchange for shared insights, creating a collective resource that becomes increasingly hard for competitors to replicate [15].

NielsenIQ exemplifies this approach. Its 40-year dominance in retail scanner data is built on exclusive agreements with tens of thousands of retailers. This dataset has become the backbone for consumer packaged goods managers, informing decisions on pricing, promotions, and category strategies. The more retailers join, the more comprehensive the data becomes, attracting even more brands and deepening the network effect [1].

To strengthen your moat, design systems that capture "data exhaust" - the context and feedback generated as users interact with your platform. For example, when users edit AI outputs or override recommendations, they provide "ground truth" data that improves model accuracy over time. This feedback loop creates a proprietary advantage that competitors can't access [19].

"Your moat isn't the model you use. It's what you capture while using it."

– Nick Talwar, CTO [19]

Stack Overflow illustrates this perfectly. By licensing its 15-year archive of 58 million Q&A pairs to AI companies, it has grown its revenue to approximately $115 million annually [20]. The value of this dataset lies not just in the content but in the community validation - upvotes, expert corrections, and accepted answers - that signals quality and accuracy, making it irreplaceable.

B2B data ecosystems also create "data gravity" - a phenomenon where the platform controlling key workflow data becomes the central hub for an entire ecosystem. When your product becomes the system of record, or the single source of truth, it establishes a structural advantage that's nearly impossible to displace. Companies in this position often command high acquisition premiums because they control the data infrastructure for entire industries.

3. Monetizing Data: Creating New Revenue Streams

Collecting data is only part of the equation; the real challenge lies in turning that data into revenue streams that matter to customers. In today’s world, where data itself is the product, companies are finding new ways to generate income by aligning pricing with customer outcomes and creating standalone data products that bring in recurring revenue.

This shift is already happening. The traditional per-seat licensing model is being replaced by usage-based pricing, where customers pay based on how much they use or achieve. This approach is becoming essential, especially for AI-focused SaaS companies, which face higher costs. For example, GitHub Copilot cost Microsoft an average of $20 per user per month in early 2023 due to expensive compute usage - a clear sign that unlimited pricing models are unsustainable [24][25].

"The right way to price is relative to the value being delivered."

– Jacob Jackson, Co-founder, Supermaven [24]

The companies thriving in 2026 are embracing hybrid monetization models. These combine a base subscription fee for predictable revenue with usage-based tiers that grow as customer consumption increases. In fact, 67% of B2B SaaS companies now use multiple pricing strategies to maximize revenue [27]. This approach not only protects profit margins but also ensures pricing reflects the value customers gain, making data-driven models a cornerstone of higher valuations.

Some companies are taking it a step further by turning their data into standalone products - such as benchmarking reports, market intelligence dashboards, and AI-ready datasets. This strategy transforms data from a secondary byproduct into a direct revenue generator, increasing a company’s value significantly when it’s time to sell.

3.1. Usage-Based Pricing Models

Usage-based pricing (UBP) ties revenue directly to the value customers gain from your platform. Instead of charging a flat fee, you bill based on specific actions - like API calls, data processed, or predictions generated. This model ensures that as customers grow and use more of your platform, your revenue scales alongside them.

For AI-first companies, where inference costs can eat up 20–40% of revenue (compared to less than 5% in traditional SaaS), UBP helps protect margins [26]. It also prevents heavy users from becoming unprofitable while offering lighter users a fairer, lower-cost option.

Replit is a great example of this shift. In 2025, the developer platform adopted usage-based pricing, skyrocketing its revenue from $2 million ARR to $144 million ARR. At the same time, its gross margins improved from single digits - and even negative margins during usage spikes - to a healthier 20–30% range [25]. By linking pricing to consumption, Replit turned a financial challenge into a growth opportunity.

GitHub made a similar move in 2025, moving away from its unlimited Copilot plan. The company introduced a base fee and added a $0.04 charge for every extra request beyond a set limit. This allowed GitHub to offset high variable costs while maintaining a steady revenue base [25]. Hybrid models like this provide stability while capturing additional income as usage grows.

Some companies are even exploring outcome-based pricing, where customers pay for results rather than usage. For example, Intercom’s AI agent, Fin, charges $0.99 per resolved support ticket instead of billing per message or per seat [24]. This approach shifts the focus to measurable achievements, making it easier to demonstrate ROI and secure renewals.

| Metric | Traditional SaaS | AI-First SaaS |

|---|---|---|

| Gross Margin | 78–85% | 55–70% [26] |

| Variable COGS per User | <5% of revenue | 20–40% of revenue [26] |

| Infrastructure % of Revenue | 8–12% | 25–40% [26] |

| CAC Payback Target | 12–18 months | 18–30 months [26] |

To prepare for UBP, start tracking key interactions on your platform now - such as data queries, model inferences, and workflow completions. Even if you’re sticking with subscriptions for now, this data will be invaluable when transitioning to usage-based pricing. It also helps prove your platform’s value during due diligence by showing exactly how customers engage with your product [28].

3.2. AI-Driven Data Products

Beyond pricing tweaks, companies are turning their proprietary data into standalone revenue streams. While usage-based pricing optimizes your core platform’s earnings, data products - like curated datasets, benchmarking reports, or market intelligence tools - open new opportunities for growth. These products are sold separately and cater to specific customer needs.

In 2023, global spending on data and analytics topped $220 billion, yet only 29% of companies described themselves as truly data-driven [30]. This disconnect presents a huge opportunity for businesses to package their data into products that solve real problems - whether helping CFOs benchmark performance or providing labeled datasets for AI training.

Treating data as a product requires attention to detail: high-quality design, rigorous standards, and continuous iteration based on feedback. If your data consistently delivers valuable insights, customers will pay a premium for access.

One way to monetize your platform’s “data exhaust” - logs, click patterns, and user feedback - is by offering Data-as-a-Service (DaaS). These curated datasets can be licensed to partners or developers for AI training [23]. Because this data reflects real-world usage and includes validation signals (like which AI outputs users accept or modify), it’s far more useful than synthetic or scraped alternatives.

"When intelligence costs a fraction of a cent per reasoning step, the API wrapper isn't a business. It's a liability."

– Mansoori Technologies [2]

Another promising strategy is creating aggregated, anonymized industry datasets. If your platform serves a specific sector, you may already have valuable comparative data. Packaging this into benchmarking tools, performance indexes, or trend reports can help customers understand their position relative to competitors. These products often have high profit margins since the cost of serving additional customers is minimal once the infrastructure is in place.

To scale AI-driven data products effectively, focus on three key areas: technical scalability (elastic infrastructure and low-latency APIs), operational scalability (MLOps for smooth deployment and monitoring), and organizational scalability (clear governance and compliance) [29]. This ensures your products can grow without compromising performance or trust.

Lastly, don’t underestimate compliance. With stricter regulations around data privacy and sovereignty, having robust audit trails for data inputs, outputs, and AI actions is crucial [31]. Building these safeguards early not only protects you legally but also becomes a selling point - buyers are willing to pay more for companies that have already solved these challenges.

4. Operationalizing Data for Long-Term Success

Achieving premium valuations means weaving data into every layer of your operations - from product development to compliance. This isn't just about creating dashboards; it's about building systems where data flows seamlessly, informs decisions in real-time, and sets the stage for a smooth, high-value exit.

According to Gartner, 40% of organizations will adopt AI-driven observability practices by 2027 [32]. However, only 42% of customers currently trust businesses to use AI responsibly, making transparent data governance an absolute must [33]. If you're preparing for a sale, your data operations need to be scalable and auditable, with metrics that clearly demonstrate measurable and repeatable value.

This section dives into how real-time analytics, robust governance, and key performance indicators (KPIs) can make data a cornerstone of your long-term success.

4.1. Real-Time Analytics for Product Development

To fully harness data, product teams should integrate continuous monitoring into their development cycles. The best teams in 2026 won't wait for quarterly reviews to assess performance. They handle behavioral signals like infrastructure, using the same principles applied to server uptime: constant monitoring, automated alerts, and immediate action when something goes wrong [32].

For instance, top teams set service-level objectives (SLOs) for key metrics and use alerts to drive fast responses. Imagine a SaaS company setting a threshold: "Time to first value must stay under 8 minutes." If it spikes to 12 minutes, an alert prompts the product manager to investigate - just like an engineer would respond to a server issue [32].

Real-time analytics also enable the "Short Loop" vs. "Long Loop" strategy. The short loop uses live data to inform immediate decisions, like an AI agent adjusting its behavior based on user interactions. The long loop feeds that data back into model training, improving the product over time [16]. This approach ensures you're solving today's problems while building a smarter product for tomorrow.

Establish an on-call system for critical metrics. If a key feature sees a 40% drop in engagement, someone should be notified and ready to act within hours [32]. This level of responsiveness not only prevents small problems from escalating but also signals operational rigor to potential buyers.

Another essential tool is the semantic layer, which standardizes metrics across teams. Platforms like dbt MetricFlow let you define metrics as code, ensuring marketing, product, and finance teams work from the same data [32]. During due diligence, this consistency proves invaluable, showing buyers that your data infrastructure is well-organized, not a chaotic mix of spreadsheets.

4.2. Data Governance for Exit Readiness

As companies shift focus from data monetization to operational readiness, scalable and auditable data systems become critical. Acquirers in 2026–2027 won't just evaluate your revenue; they'll scrutinize your data infrastructure. Messy systems can reduce valuations by millions - or even sink a deal. Buyers want clear lineage, documented taxonomies, and proof that your data is accurate, compliant, and scalable [32][12].

To ensure compliance and accountability, create a centralized, certified data library. Instead of granting every team unrestricted access to raw databases, provide a collection of "certified" tables that are clean, documented, and verified. Assign ownership to each dataset, making someone responsible for its quality and compliance [34]. This federated approach avoids the chaos of inconsistent queries across teams.

Next, apply the Six Dimensions of Data Quality: ensure your data is Accurate, Complete, Consistent, Timely, Valid (follows rules), and Unique (free of duplicates) [34]. Automate quality checks with tools like schema validation and anomaly detection to catch errors before they impact analytics [34].

Compliance is non-negotiable. With stricter AI regulations emerging in 2026, buyers will expect PII masking, role-based access controls, and detailed audit logs for all sensitive data interactions [35][36]. Platforms like Vanta or Drata can automate compliance, maintaining readiness and simplifying due diligence [12].

"Data strategy isn't a technical problem, it's a business strategy problem that happens to involve technology."

– Tom Tunguz [34]

Finally, conduct a 30-day data readiness audit. Can your team calculate ARR, NRR, and churn from a single source in under two hours? If not, your infrastructure needs work [37]. Buyers expect quick access to clean financials and usage metrics - delays or disorganization could cost you the deal.

4.3. Measuring Key Data KPIs

Certain KPIs are key to signaling enterprise value, such as efficiency, retention, and product activation metrics. These aren't vanity metrics - they're the numbers buyers use to model future performance and justify their offers. Strong KPIs demonstrate operational discipline and support premium valuations.

Here’s a breakdown of critical KPIs and their benchmarks for 2026–2027:

| KPI Category | Metric | 2026–2027 Premium Benchmark |

|---|---|---|

| Efficiency | Burn Multiple | < 1.0x (Exceptional) [38] |

| Efficiency | Revenue per Employee | > $350,000 [37] |

| Retention | Net Revenue Retention (NRR) | 120%–130% [37] |

| AI Operations | AI Gross Margin | 70%+ (after inference costs) [38] |

| Growth | LTV/CAC Ratio | 3:1 (Healthy) to 5:1 (Strong) [37][38] |

| Product | Activation Rate | > 55% (Self-serve B2B) [38] |

| Product | Time to Value (TTV) | < 10 Minutes [12] |

Net Revenue Retention (NRR) is often the most critical metric. Leading companies aim for 120–130% NRR, meaning existing customers are expanding their usage faster than others are churning. Anything below 100% suggests weak product-market fit [37][38].

For AI-based companies, AI Gross Margin is another key measure. While traditional SaaS companies enjoy margins of 80–90%, AI-driven software often operates at 50–60% due to higher compute costs. Maintaining a margin above 70% shows you've optimized your infrastructure and aren't overspending on operations [38][24].

Activation Rate reflects how many users reach a meaningful "Aha!" moment in your product. For self-serve B2B SaaS, the target is above 55% [38]. Identify the in-product action most strongly tied to 90-day retention and optimize your onboarding to drive that behavior.

Lastly, track Revenue per Employee. Companies exceeding $350,000 per employee demonstrate efficiency, signaling that automation and AI are being used effectively to scale without inflating costs [37]. Buyers want to see that your business can grow profitably without adding unnecessary headcount.

"In 2026, the winners will not be the loudest or the fastest - they will be the most operationally disciplined."

– Laura Anderson, Startup Pill [12]

Strong revenue alone isn't enough. Companies that combine solid financials with clean, auditable data systems and metrics that prove stickiness and efficiency will stand out. Start tracking these KPIs now to position yourself for success.

5. Conclusion: Positioning for Premium Valuations in 2026–2027

To secure premium valuations in 2026–2027, businesses need to leverage robust data strategies. As previously outlined, proprietary data isn't just a growth driver - it creates a competitive edge. Founders aiming for premium exits focus on data as a core asset, not just product features. They excel in answering the "One Question" Test: "If the underlying model improves 10× next year, does my product become more valuable or less?" If the answer is "less", the product risks being seen as a disposable layer rather than a durable, valuable asset. Successful companies understand that software is simply the delivery tool for proprietary data and specialized workflows [1].

To reposition effectively, follow the 90-Day Pivot Roadmap:

- Days 1–30: Audit your product to identify and phase out features that can be easily replicated.

- Days 31–60: Focus on integrating proprietary data, such as historical insights or exclusive datasets, that generic models cannot duplicate.

- Days 61–90: Evolve your AI capabilities from a simple chat interface into an action-driven tool that can autonomously execute tasks within workflows [4].

This transformation shifts your business from being a System of Record to becoming a System of Action - a feature highly valued by acquirers in 2026 [4].

"The API era and the LLM era are the same movie. The companies that survived the API era owned the graph. The companies that will survive the LLM era own the data."

– Lewis Lin [1]

To build a long-term advantage, focus on creating a defensible data moat. This moat should include data that can't be synthesized, value that grows over time, and logic that becomes integral to your clients' decisions [1]. Embedding feedback loops further strengthens your product's value [2].

Maintain strong unit economics by routing simpler tasks to cost-efficient models like MiMo-V2-Flash, which operates at just 1/35th the cost of Claude Sonnet [2]. Track key performance metrics such as:

- Revenue Per Employee: $350,000 or more

- Net Revenue Retention: 120–130%

- AI Gross Margin: 70% or higher

These metrics demonstrate operational discipline and scalability, helping sustain gross margins above 80% [4]. By 2026, 80% of private equity and strategic buyers are already offering premiums for AI-native SaaS companies, with 87% expecting these premiums to hold or increase through 2027 [3]. Positioning your data as the core product is the key to unlocking premium exit opportunities.

FAQs

What counts as proprietary data that AI can’t copy?

Proprietary data refers to information that AI can't duplicate. This includes data gathered through physical methods, secured via exclusive agreements, or amassed over extended periods - like readings from factory sensors. For such data to stand out, it must remain unavailable to competitors and impossible to recreate. Over time, its value should increase as it becomes embedded in client workflows and decision-making processes. The focus is on maintaining its uniqueness and the long-term benefits it provides.

How can I build a data moat without compromising user privacy?

To create a strong data advantage while respecting user privacy, aim to gather exclusive data that's difficult for competitors to duplicate. This could come from long-term collection efforts or forming unique partnerships. Protect this data using privacy-focused tools like self-hosted solutions or edge computing environments. Additionally, set up clear agreements outlining who owns the data and how it can be used. By focusing on privacy-first strategies, you can build trust, stay compliant, and maintain an edge in the market.

When should I switch from per-seat pricing to usage-based pricing?

As we move into 2026, it might be worth considering a shift to usage-based or outcome-based pricing models, especially as AI becomes more accessible and workloads grow increasingly dynamic. Traditional per-seat pricing could lose relevance, particularly since AI often replaces multiple human roles and manages fluctuating workloads. By adopting a more adaptable pricing approach, you can better align your model with the actual value delivered to customers.

Related Blog Posts

- Is Your SaaS an AI “Wrapper”? Why Most Thin AI Layers Will Collapse (And How to Build a Defensible Data Moat).

- 8. $4.9 Trillion in M&A in 2025. A Record. 25% of Every Deal Over $5 Billion Had an AI Theme. This Is Not a Cycle. This Is a Structural Shift in Who Controls Valuable Assets. CNBC

- 16. The Era of "Growth at All Costs" Is Over. The Era of "Whoever Controls the Data Controls the Exit Multiple" Has Begun. Most Founders Are Still Playing the Old Game.

- 17. Investors Aren't Looking for Workflow Stickiness Anymore. They're Asking: "If an AI Agent Does This Work, Who Needs Your Software?" If You Don't Have an Answer, Your Valuation Already Does. TechCrunch