Data is no longer just a byproduct of operations - it’s a core business asset. Companies that treat data as a product, with clear ownership and high-quality standards, see faster analytics, lower costs, and stronger AI performance. Yet, many businesses struggle with siloed, unreliable, or poorly managed data, limiting its potential.

Here’s what you need to know:

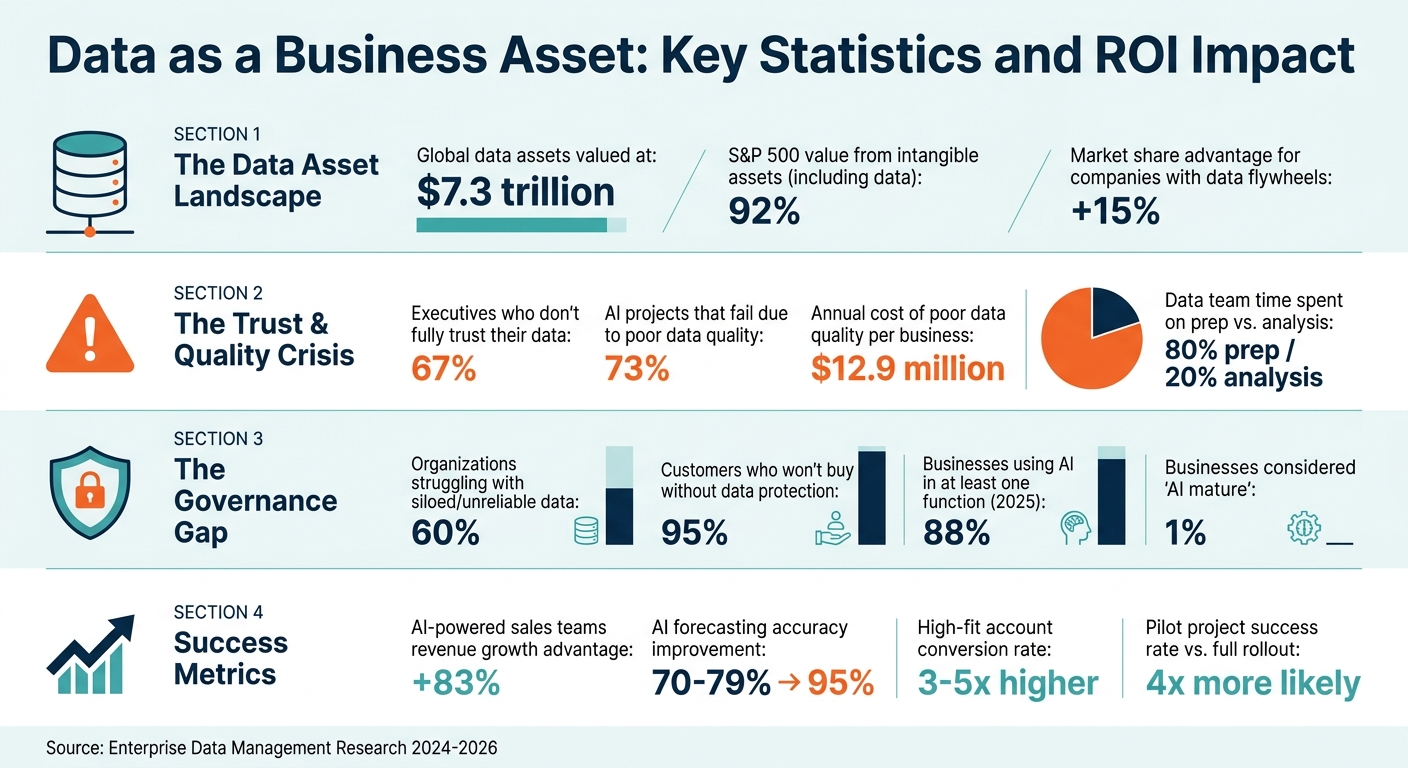

- Data as an Asset: With global data assets valued at $7.3 trillion, proprietary data gives businesses a competitive edge, enabling better decision-making and new revenue streams.

- Key Challenges: 67% of executives don’t fully trust their data, and 73% of AI projects fail due to poor data quality and governance.

- Solutions: Treat data like a product by assigning ownership, enforcing quality checks, and integrating it into workflows. Build scalable infrastructure and adopt AI tools for actionable insights.

- Governance Matters: Strong frameworks and tools like Unity Catalog or Acceldata ensure compliance, protect data, and reduce errors.

If you’re not leveraging data effectively, you’re leaving growth opportunities on the table. Proper management transforms raw data into a strategic tool for growth, better decisions, and reliable AI systems.

Data as a Business Asset: Key Statistics and ROI Impact



Why Data Should Be Treated as a Business Asset

Viewing data as a business asset lays the groundwork for strategies that drive growth, improve decision-making, and increase customer value, and many leading SaaS and AI companies already treat it as a cornerstone of all three. This perspective has tangible financial weight: globally, data assets are valued at $7.3 trillion, and 92% of the value of S&P 500 companies now stems from intangible assets like data [12].

Proprietary data gives companies a competitive edge by creating a protective barrier through unique datasets - ranging from niche insights to real-world usage patterns. These datasets open up new revenue opportunities. For example, Stripe’s recovery tools helped recover over $6.5 billion in 2024. This asset-focused mindset also enables offerings like AI-powered premium tiers, usage-based pricing models (e.g., per inference or token), and Data-as-a-Service (DaaS) [7][9].

Using Data to Drive Growth

Every user interaction generates valuable data, which fuels growth. Spotify has spent 15 years building a competitive edge through a data flywheel: every track a user listens to fine-tunes its recommendation algorithms, leading to more relevant playlists. This cycle keeps improving, creating a system that’s tough for competitors to imitate [12]. AI-powered tools amplify this process, with better models driving higher engagement and improved product performance. Companies leveraging this feedback loop enjoy a 15% market share advantage over their competitors [8].

Data products like calculators or benchmarking tools also play a role in customer acquisition. Spotify’s refined recommendations and Zillow’s property valuation tools both enhance user engagement and drive organic growth [5]. On top of that, predictive analytics can help companies identify users at risk of churning by monitoring feature usage. These insights allow for proactive retention campaigns, further fueling growth [11]. This approach not only strengthens customer relationships but also supports smarter decision-making, as explored in the next section.

How Data Improves Decision-Making

High-quality data and robust governance frameworks enable faster, more informed decisions. However, trust remains a hurdle - 60% of organizations report challenges with siloed or unreliable data [8]. Companies that prioritize strong governance and continuous data quality checks gain a clear competitive advantage.

"The real challenge isn't scaling AI. It's trusting the data it runs on."

– Mike McQuaid, Chief Revenue Officer, Acceldata [8]

Efficiency improves dramatically when data from various sources - like CRM systems, billing platforms, and product analytics - comes together under a unified infrastructure. This integration provides a comprehensive view of the customer, eliminating fragmented decision-making. Real-time data further enhances operational agility. For instance, in January 2026, OpenAI introduced an AI-powered data agent using GPT-5.2 to help over 3,500 internal users navigate 600 petabytes of data. Tools like Codex enrichment and "Memory" integration allowed teams to answer complex queries using natural language [10].

Increasing Customer Value Through Data

Personalization is no longer a bonus; it’s an expectation. Data insights help companies track user behavior, predict needs, and deliver tailored experiences that boost satisfaction and loyalty [11]. A great example is TikTok Studio, which provides creators with detailed metrics and AI-driven content suggestions [5].

The combination of AI and data creates a powerful cycle of value. AI uncovers patterns and makes predictions, while high-quality data enhances the accuracy and reach of those models [12].

"Data without AI is a warehouse. AI without data is an empty engine. Together, they create a compounding value cycle."

– David Stroll, Co-Founder and Chief Scientist, Opagio [12]

Embedded analytics, where insights are seamlessly integrated into workflows rather than accessed through separate dashboards, further enhances customer value. By reducing friction and enabling immediate action, these tools make products indispensable.

How to Optimize Data Utilization

Bridging the gap between gathering data and extracting its value depends on having the right infrastructure, analytics, and tools in place. Companies that close this gap often enjoy tangible benefits - higher revenue, improved customer retention, and faster decision-making.

Using Machine Learning and Predictive Analytics

Machine learning transforms historical data into actionable insights, while predictive analytics can anticipate revenue trends, highlight churn risks, and optimize how products are used by uncovering subtle patterns. However, before deploying these models, companies need to ensure their infrastructure is ready for AI. This includes reconstructing user journeys from raw events, replaying metrics consistently, aligning feature definitions between training and production, and implementing strict access controls for sensitive data [13].

A feature store plays a key role here, ensuring that the same feature definitions are used during both training and production. This prevents "training-serving skew", a common issue where models perform well in testing but fail in real-world use [13][15]. By addressing this, businesses can avoid costly errors and build trust in their AI systems.
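One way to picture this is a small registry in which each feature is defined once and called from both the training job and the online service. The sketch below is a minimal illustration rather than a real feature store: the `Event` type, the `sessions_last_7d` feature, and the `FEATURES` registry are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable


@dataclass(frozen=True)
class Event:
    """Hypothetical raw product event."""
    user_id: str
    name: str
    ts: datetime


def sessions_last_7d(events: list[Event], as_of: datetime) -> float:
    """Count 'session_start' events in the 7 days before `as_of`."""
    window_start = as_of - timedelta(days=7)
    return float(
        sum(1 for e in events
            if e.name == "session_start" and window_start <= e.ts < as_of)
    )


# Illustrative registry: exactly one definition per feature name.
FEATURES: dict[str, Callable[[list[Event], datetime], float]] = {
    "sessions_last_7d": sessions_last_7d,
}


def build_feature_vector(events: list[Event], as_of: datetime) -> dict[str, float]:
    """Compute every registered feature for one user at one point in time.

    Training calls this with a historical `as_of`; serving calls it with the
    current time. Both paths run the same code.
    """
    return {name: fn(events, as_of) for name, fn in FEATURES.items()}
```

Because training passes a historical `as_of` and serving passes the current time to the same function, the window logic cannot quietly diverge between the two paths, which is the failure a feature store is meant to prevent.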

The results can be striking. Sales teams leveraging AI-powered revenue tools report 83% higher revenue growth compared to their peers [17]. Traditional revenue forecasts typically achieve an accuracy of 70% to 79%, but AI-driven forecasting can push this figure up to 95% [20]. Additionally, accounts scored as high-fit through AI are three to five times more likely to convert than low-fit accounts [20].

"The fastest way to kill an AI roadmap is to ship a model that can't be reproduced and can't be explained."

– Apptension [13]

To support these advanced analytics, a scalable and reliable data infrastructure is essential.

Building Scalable Data Infrastructure

As data volumes increase, infrastructure must grow without becoming overwhelmed. A modular design is often the best approach, structured into five layers: instrumentation (data sources), ingestion (ETL/ELT), storage (data warehouse or lakehouse), transformation (data modeling), and activation (reverse ETL) [16][13]. This setup minimizes bottlenecks and allows each layer to evolve independently.

The data warehouse should act as the single source of truth, consolidating fragmented data from tools like CRM systems, marketing platforms, and product analytics [16][17]. For instance, in 2024, Collibra used a unified data platform to centralize go-to-market data, cutting territory planning time by 30% [17]. Similarly, between 2024 and 2025, HubX implemented automated churn management with Paddle's Retain tool, saving $106,000 in potential lost revenue within just three months and retaining 63% of customers who intended to cancel [18].

Data contracts enforce strict schemas for events, ensuring stable names, versioning, and typed payloads to avoid downstream issues when products evolve [13]. Validating data at the ingestion layer using schema checks and quarantining malformed data before it reaches the warehouse is equally important. Defining a set of 10–20 "golden events" tied to core business KPIs helps maintain high-quality, reliable data [13].
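As a rough sketch of what contract enforcement at ingestion could look like, the example below checks each event against a registered contract and routes failures to a quarantine list instead of the warehouse. The `subscription_upgraded` event, its payload fields, and the `CONTRACTS` registry are assumptions made up for illustration; a production setup would more likely rely on a schema registry or a validation library.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class EventContract:
    """Hypothetical contract: a stable name, a version, and typed payload fields."""
    name: str
    version: int
    payload_types: dict[str, type]


# Illustrative registry keyed by (name, version); the event and fields are made up.
CONTRACTS = {
    ("subscription_upgraded", 1): EventContract(
        name="subscription_upgraded",
        version=1,
        payload_types={"plan": str, "mrr_delta_usd": float},
    ),
}


def validate(event: dict[str, Any]) -> list[str]:
    """Return a list of contract violations; an empty list means the event is valid."""
    name, version = event.get("name"), event.get("version")
    if not isinstance(name, str) or not isinstance(version, int):
        return ["name must be a string and version an integer"]
    contract = CONTRACTS.get((name, version))
    if contract is None:
        return [f"no contract registered for {name} v{version}"]

    errors = []
    if not isinstance(event.get("actor_id"), str):
        errors.append("actor_id must be a string")
    payload = event.get("payload")
    if not isinstance(payload, dict):
        return errors + ["payload must be an object"]
    for field_name, expected in contract.payload_types.items():
        if field_name not in payload:
            errors.append(f"payload.{field_name} is missing")
        elif not isinstance(payload[field_name], expected):
            errors.append(f"payload.{field_name} must be {expected.__name__}")
    return errors


def ingest(events):
    """Split a batch into accepted events and quarantined (event, errors) pairs."""
    accepted, quarantined = [], []
    for event in events:
        errors = validate(event)
        if errors:
            quarantined.append((event, errors))
        else:
            accepted.append(event)
    return accepted, quarantined
```

Keeping the rejection reasons next to each quarantined event makes it easier to tell a producer bug from a contract that needs a new version.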

"Data strategy isn't a technical problem, it's a business strategy problem that happens to involve technology."

– Tomasz Tunguz, General Partner, Theory Ventures [14]

Once the infrastructure is solid, AI tools can turn raw data into actionable insights that drive growth.

Using AI Tools for Data Insights

AI tools illustrate how effective data management can yield actionable insights and improve competitive positioning. The edge no longer lies in the AI models themselves, since models like GPT-4, Claude, and Gemini are widely available to everyone. Instead, the real advantage comes from the context layer: unified data, resolved identities, and interconnected signals [19][7]. For instance, a single call recording can hold 10–20 times more context (12,000–16,000 tokens) than an average CRM record (500–1,200 tokens) [19].

Tools like Codex enrichment allow teams to answer complex questions using natural language [10]. This capability shows how AI can extract structured insights from unstructured sources like call transcripts, meeting notes, and emails - surfacing details like budget signals or tech stack information that might otherwise go unnoticed [21].

Integration methods vary. The Model Context Protocol (MCP) connects data sources like ZoomInfo or internal databases to AI tools such as Claude, ChatGPT, or custom agents [19][10]. Conversational interfaces embedded in platforms like Slack or web tools enable natural language querying [10]. Automated extraction can then write structured details from calls and emails, such as budgets, tech stacks, and key stakeholders, directly into CRM fields without manual input [21].

"AI strategy is data strategy. The variable that matters is what your AI knows about your market, your accounts, and your motion."

– GTM AI Framework [19]

To maximize the impact of AI, focus on activation - ensuring that users directly experience the value it delivers. While 88% of businesses used AI in at least one function by 2025, only 1% considered themselves "AI mature", meaning AI was fully integrated into their workflows and actively measured against outcomes [9]. This gap between adoption and maturity highlights a major opportunity for optimization.

Setting Up Data Governance

Without proper governance, data can quickly turn into a liability. Poor data quality costs organizations an average of about $12.9 million a year [24][26]. Even more concerning, 73% of AI projects fail not because of weak algorithms but because of data quality and governance issues [24]. Add the fact that 95% of customers won’t buy from a company if their data isn’t adequately protected [27], and it’s clear: governance isn’t just a bureaucratic task - it’s the backbone of using data effectively.

Striking the right balance between accessibility and control is key. Data teams spend about 80% of their time on tasks like discovery, preparation, and protection, leaving little room for actual analysis [26]. Good governance minimizes this workload by setting up clear policies, roles, and processes. This ensures data is secure, reliable, and compliant without creating unnecessary slowdowns.

Creating a Governance Framework

A solid governance framework rests on four main pillars: data quality management, stewardship, data protection and compliance, and lifecycle management [22][23][26]. The best approach depends on your organization’s size and needs:

- Small organizations (<1,000 employees) or those in highly regulated industries often benefit from centralized governance for consistency, though it can create bottlenecks.

- Mid-sized firms (1,000–5,000 employees) often find a hybrid approach works best.

- Large enterprises (>5,000 employees) typically thrive with federated models that allow for scalability [22][24][25].

Clearly defining roles is crucial. An Executive Sponsor sets the vision and secures funding. Data Owners (usually senior leaders) ensure data quality within their domains, while Data Stewards handle daily policy enforcement and manage metadata [22][27]. A Data Governance Council, made up of stakeholders from IT, legal, and business units, helps resolve disputes and updates policies as regulations evolve [23][27].

"Governance is 80% people/culture, 20% technology."

– Sagar Rabadiya, Co-Founder, SR Analytics [24]

Starting small often leads to better outcomes. Organizations that begin with a pilot project are four times more likely to succeed compared to those attempting a full-scale rollout immediately [24]. For example, focus on a high-value area like customer PII or revenue data to show measurable results, such as ROI, within 90 days. A regional bank demonstrated this in 2024 by introducing a 15-minute catalog training for data access requests, which boosted compliance from 34% to 91% in just one quarter [24].

Another key step is establishing a data classification system early on. Define three to five levels - like Public, Internal, Confidential, and Restricted - to automatically apply security measures and retention policies based on sensitivity [25][26]. Adding a semantic layer can also help standardize metrics across analytics and AI tools [22].
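A classification scheme like this becomes enforceable once each level maps to concrete controls. The following sketch shows one possible shape for that mapping; the column names, retention periods, and `Controls` fields are illustrative assumptions, not recommendations, and real values would come from your legal and security teams.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """The four example levels named above, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Controls:
    encrypt_at_rest: bool
    mask_in_analytics: bool
    retention_days: int


# Assumed values for illustration only.
CONTROLS_BY_LEVEL = {
    Sensitivity.PUBLIC: Controls(False, False, 3650),
    Sensitivity.INTERNAL: Controls(True, False, 1825),
    Sensitivity.CONFIDENTIAL: Controls(True, True, 730),
    Sensitivity.RESTRICTED: Controls(True, True, 365),
}

# Hypothetical column labels, as a data steward might record them in a catalog.
COLUMN_LEVELS = {
    "blog_title": Sensitivity.PUBLIC,
    "account_plan": Sensitivity.INTERNAL,
    "email": Sensitivity.CONFIDENTIAL,
    "ssn": Sensitivity.RESTRICTED,
}


def controls_for(column: str) -> Controls:
    """Look up controls for a column; unlabeled columns get the strictest level."""
    level = COLUMN_LEVELS.get(column, Sensitivity.RESTRICTED)
    return CONTROLS_BY_LEVEL[level]
```

Defaulting unlabeled columns to the strictest level is a deliberate choice in this sketch: a new column stays locked down until someone classifies it.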

Governance Platform Options

The right platform can make governance actionable. For instance:

- Anjana Data Platform ensures auditability and control in complex environments [26].

- Unity Catalog consolidates governance for all data and AI assets in a single layer [26].

- Acceldata uses AI to monitor data systems 24/7, identifying and fixing quality issues without constant human oversight [27].

These platforms often automate essential tasks, such as column-level lineage, which tracks data from its origin to its final use - critical for compliance with regulations like GDPR, CCPA, and HIPAA [26][22]. They also enforce role-based access control, ensuring users only access the data they truly need [26][28].
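To make role-based access control concrete, here is a minimal sketch of a column-level check. It does not reflect how any of the platforms above implement it; the roles, tables, and the `GRANTS` mapping are hypothetical.

```python
# Hypothetical grants: role -> table -> columns that role may read.
GRANTS = {
    "support_agent": {
        "customers": {"customer_id", "plan", "status"},
    },
    "finance_analyst": {
        "customers": {"customer_id", "plan"},
        "invoices": {"invoice_id", "customer_id", "amount_usd"},
    },
}


def check_access(role: str, table: str, columns: list[str]) -> list[str]:
    """Return the requested columns if all are granted; otherwise raise.

    Unknown roles and tables have no grants, so every request is denied by default.
    """
    allowed = GRANTS.get(role, {}).get(table, set())
    denied = sorted(set(columns) - allowed)
    if denied:
        raise PermissionError(
            f"{role} may not read {table} columns: {', '.join(denied)}"
        )
    return list(columns)
```

The check fails closed: a role sees only what has been explicitly granted, which matches the principle that users access only the data they need.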

Real-world examples show how effective governance can transform organizations. By October 2025, Doctolib reframed its governance program as an enablement tool rather than a compliance requirement, achieving a 6x increase in adoption. Over 900 employees across product, engineering, and security now contribute to a "living catalog", which has reduced ad-hoc data discovery and created a lasting knowledge base [29]. Similarly, RSG Group, the parent company of Gold’s Gym, standardized definitions for key metrics like "active member" and "revenue" across 900+ locations in 30 countries. This eliminated duplicate SQL and cut down data preparation time significantly [29].

"You can't trust your AI answers if you don't trust your data. Governance isn't optional anymore - it's the cost of accurate AI."

– Armon Petrossian, CEO & Co-founder, Coalesce [29]

Practical Steps to Maximize Data Value

Once you’ve established strong data governance, the next step is turning that data into business outcomes. This shift matters: 75% of CIOs report struggling to extract meaningful insights from their existing data [32]. Simply storing data isn’t enough - it needs to be applied across analytics, migrations, and automation to drive growth and mitigate risk. The sections below cover how SaaS analytics tools, AI-driven migrations, and agentic AI can turn data into a competitive edge.

Using SaaS Analytics for Business Insights

Traditional dashboards often focus on past events, but modern SaaS analytics tools go a step further by identifying problems before they escalate. Take the example of TalentFlow in April 2026. Despite six dashboards showing all metrics as "green", the company was losing revenue. Parse, a SaaS analytics tool, found a bug in GitHub commits that was causing a 34% payment failure rate in the EU and had stalled $2.3 million in enterprise deals [31].

"Parse finds the problems you don't know exist before they become board-level crises. Our data team needs two weeks. Parse needs four minutes." – Priya, CEO [31]

Other tools like Omni Analytics and Statwide make it easier for teams to unify key metrics like ARR (Annual Recurring Revenue), NRR (Net Revenue Retention), churn, and CAC (Customer Acquisition Cost). These tools help product, go-to-market, and finance teams quickly identify revenue opportunities and flag accounts at risk [30][32][33]. For instance:

- Photoroom: With a small data team of three, Photoroom used Omni Analytics to enable over 100 employees to access insights quickly, significantly reducing their backlog of ad-hoc data requests [33].

- QuestionPro: Founder Vivek Bhaskaran implemented Statwide to give the customer success team a unified view of every account, allowing them to address issues before they impacted customer retention [32].
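Unifying metrics such as ARR, NRR, churn, and CAC usually starts with agreeing on one formula for each. The functions below use common textbook definitions as an assumption; the tools mentioned above may calculate them differently, and many companies adjust these formulas to their own business model.

```python
def annual_recurring_revenue(mrr: float) -> float:
    """ARR as monthly recurring revenue annualized."""
    return mrr * 12


def net_revenue_retention(starting_mrr: float, expansion: float,
                          contraction: float, churned: float) -> float:
    """NRR for a cohort over a period, as a ratio (1.0 means 100%).

    Only revenue from customers who existed at the start of the period counts;
    revenue from new customers is excluded.
    """
    if starting_mrr <= 0:
        raise ValueError("starting_mrr must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


def customer_churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of starting customers lost during the period."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    return customers_lost / customers_at_start


def customer_acquisition_cost(sales_and_marketing_spend: float,
                              new_customers: int) -> float:
    """Average spend needed to win one new customer in the period."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return sales_and_marketing_spend / new_customers
```

Putting definitions like these in one shared module or semantic layer means product, finance, and go-to-market teams all report the same number for the same metric.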

For RevOps teams, tools like Discern AI monitor pipeline health, inspect deals, and ensure data quality, transforming raw CRM data into what some describe as "Investor-Grade Truth" [34]. Meanwhile, Kaelio offers a "context layer" built from a company’s data stack (e.g., Snowflake, dbt), which grounds AI agents in verified business definitions [35]. The trend is clear: businesses are moving away from static dashboards toward autonomous systems that monitor data and suggest actionable steps.

Migrating Data with AI to Reduce Vendor Lock-In

Vendor lock-in happens when data is confined to proprietary systems, making transitions costly and complex. AI-driven migration tools simplify this process by automating ETL (Extract, Transform, Load) workflows and ensuring data remains consistent across platforms. Implementing data contracts and schema registries can prevent "semantic drift" - a common issue during migrations [13]. For example, defining contracts for events (with stable names, versions, actor IDs, and typed payloads) at the ingestion layer ensures data portability [13].

Codex-driven enrichment takes this a step further. By analyzing pipeline logic (e.g., Spark or Python), AI can derive code-level definitions of data tables, capturing business intent that traditional SQL metadata might overlook [10]. In January 2026, OpenAI’s engineering team deployed an in-house AI data agent powered by GPT-5.2. This agent processes over 600 petabytes of data across 70,000 datasets, using Codex-derived enrichment to differentiate between similar tables. This allowed employees to move from questions to insights in minutes instead of days [10].

Packaging data, semantics, and governance as logical data products creates portable, self-describing resources that AI systems can use regardless of the underlying storage system [4]. Collibra's unified data platform, which cut its territory planning time by 30%, shows the payoff of this kind of consolidation [17].

"Organizations deploying sovereign enterprise AI agents are building proprietary competitive advantages that generic software cannot replicate - while those clinging to beta SaaS face an existential threat." – Eugene Vyborov, CEO, Ability.ai [36]

With portable and unified data, companies are now positioned to leverage AI agents for automated decision-making.

Adding Agentic AI to Workflows

Agentic AI systems are designed to make decisions, execute multi-step tasks, and improve through self-learning - all without constant human intervention. Unlike traditional automation, which relies on rigid rules, agentic AI can adapt to changing conditions and handle complex scenarios [37].

To ensure reliability, these systems require a multi-layered context. This includes metadata on table usage, human annotations, code-level definitions (often derived via Codex), insights from tools like Slack or Notion, and persistent memory [10]. For example, OpenAI’s internal data agent uses a memory layer to store corrections, such as specific string-matching filters for analytics experiments. This prevents the agent from repeating past mistakes [10]. When intermediate results (e.g., a SQL join) return zero rows, the agent investigates, adjusts its approach, and retries autonomously [10].
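The retry-and-remember behavior described above can be sketched in a few lines. This is an illustration of the general pattern only, not OpenAI's implementation: in a real agent a model would generate the candidate queries, whereas here they are a fixed list, and `run_with_retry`, the `experiments` table, and the `memory` dictionary are hypothetical.

```python
import sqlite3


def run_with_retry(conn, candidate_queries, memory):
    """Try candidate SQL statements in order until one returns rows.

    Queries that came back empty are remembered in `memory` so that later
    calls skip them instead of repeating the same mistake.
    """
    known_empty = memory.setdefault("known_empty", set())
    for sql in candidate_queries:
        if sql in known_empty:
            continue
        rows = conn.execute(sql).fetchall()
        if rows:
            return sql, rows
        known_empty.add(sql)  # zero rows: record it and move to the next candidate
    return None, []


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE experiments (name TEXT, variant TEXT)")
    conn.execute("INSERT INTO experiments VALUES ('Checkout_Test_v2', 'B')")
    candidates = [
        # Exact match fails because the stored name uses different casing.
        "SELECT variant FROM experiments WHERE name = 'checkout_test_v2'",
        # Adjusted filter: compare case-insensitively.
        "SELECT variant FROM experiments WHERE lower(name) = 'checkout_test_v2'",
    ]
    memory = {}
    print(run_with_retry(conn, candidates, memory))
```

Note that zero rows is sometimes the correct answer, so a production agent would also need a way to decide when an empty result is legitimate rather than a sign of a wrong filter.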

"Data products have become the foundation for AI agents... Agents cannot reason over chaos." – Suda Srinivasan, Group Outbound Product Manager, Google Cloud [4]

When implementing agentic AI, start small. Focus on 10–20 "golden events" that drive key business metrics and write strict, versioned specifications for them before scaling [13][40]. If historical queries are insufficient, use synthetic data to bootstrap evaluation, leveraging language models to generate paraphrases, boundary conditions, and adversarial cases [37]. Additionally, incorporating "human-in-the-loop" checkpoints ensures accountability for high-impact tasks or complex scenarios [39].

For instance, a major retailer deployed an agentic AI system in March 2026 to optimize seasonal campaigns. By incorporating strategic human checkpoints, they improved attach rates and reduced service friction in just a few days [38]. Agentic AI can cut manual configurations by up to 80% and resolve issues ten times faster than traditional workflows. Its success is measured by whether it triggers correct downstream actions or retrieves the right IDs [37].

Comparison Table: Data Optimization Tools

Choosing the right data optimization tool can make a big difference in how effectively your business leverages data. Your decision should align with your infrastructure, team capabilities, and overall goals. For context, over 60% of Fortune 500 companies rely on Databricks for their data and AI needs [41], and by 2026, 88% of organizations had adopted AI analytics tools [44].

Tool Comparison

Here’s a breakdown of some top data optimization platforms, comparing their strengths in integration, scalability, pricing, and governance. Each tool offers unique features catering to specific needs:

- Databricks stands out with its unified lakehouse architecture, combining analytics and AI seamlessly [45].

- Snowflake is celebrated for its SQL-first approach, elastic scalability, and zero-copy integration [45].

- Tableau Next and Looker excel in visualization and governance. Looker’s LookML, for instance, reduces AI query errors by 66% [45].

- Alation and GoodData focus on AI-driven analytics and embedded SaaS, with Alation trusted by 40% of the Fortune 100 [42].

| Tool | Best For | Ease of Integration | Scalability | Pricing Model | Governance Features |
|---|---|---|---|---|---|
| Databricks | ML/AI & Engineering | High (Unity Catalog) | High (Serverless/Photon) | Consumption-based (DBU) | Strong (Unity Catalog for data, models, AI agents) [41] |
| Snowflake | SQL-first Analytics | High (Zero-copy) | High (Elastic) | Credit-based | Strong (Cortex/Horizon) [45] |
| Tableau Next | Visualization & Analytics | High (API-first) | High | Per-user ($75–$115/mo) | Moderate (Tableau Semantics) [43][45] |
| Looker | Google Cloud/Governance | High (LookML) | High | Custom ($150–$200/user/mo) | Strong (Centralized semantic layer) [45] |
| Alation | AI-driven Data Intelligence | High (Slack, Teams, Databricks) | High | Custom enterprise | Advanced (Automated documentation, quality monitoring) [42] |
| GoodData | Embedded SaaS/AI | High (Cloud-native) | High | Predictable/Usage-based | Strong (Intelligence Layer) [44][45] |
| Power BI | Microsoft Ecosystem | Moderate | Moderate/High | Per-user ($14–$24/mo) + Capacity | Moderate (Fabric) [45] |

Cost Considerations

The cost of these tools varies widely depending on the size and stage of your company. For example:

- Early-stage companies (under $1 million ARR) often spend between $0–$50,000 annually on SaaS analytics tools.

- Growth-stage companies ($1–$10 million ARR) typically invest $50,000–$200,000 per year on a modern data stack.

- Larger organizations (over $10 million ARR) can spend $500,000–$2 million or more annually on full-scale data solutions [14].

For Databricks specifically, mid-sized teams usually spend $3,000–$8,000 per month on its consumption-based DBU pricing model [45].

Key Features to Look For

When evaluating tools, focus on those that serve as a System of Record, where your "ground truth" data resides. These platforms are more resilient to AI disruptions and help improve customer retention [46]. Additionally, prioritize features like automated lineage, PII classification, and audit trails to meet regulatory requirements and support autonomous AI agents [4].

Conclusion

Think of data not as a side effect of operations but as a core business asset. As AI models become more standardized, your proprietary data can set you apart. Companies with high-quality, well-organized data gain an edge that competitors with shorter retention cycles simply can't match [3].

"In 2025, the companies that win will be the ones who treat their B2B company and contact data as a core product - not a 'nice-to-have' or an afterthought."

– Mark Feldman, Founder & CEO, RevenueBase [1]

Research shows that treating data as a product speeds up deployment and reduces ownership costs [2]. Yet, trust in data remains a challenge for many organizations. This highlights the need for a shift in strategy: assign dedicated ownership, establish clear SLAs, and integrate workflows to turn operational data into a strategic tool that fuels growth, sharpens decision-making, and powers reliable AI systems [4].

Start with a clear roadmap and work toward incremental improvements. Focus on quality over quantity - 10,000 clean, accurate records will always outperform 100,000 messy ones [1]. Build a layered data infrastructure (Bronze, Silver, Gold) to streamline validation and ensure faster access to insights [2]. And remember: data only becomes valuable when used effectively [6]. Companies that preserve their operational history and treat it as a strategic resource will gain a lasting advantage over their competitors [3].
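The Bronze, Silver, and Gold layering can be illustrated with a toy pipeline: raw records land untouched in Bronze, are validated and deduplicated into Silver, and are aggregated into business-level figures in Gold. The field names and cleaning rules below are assumptions for the sake of the example; real pipelines typically run on a warehouse or lakehouse rather than in-memory lists.

```python
from collections import defaultdict


def to_silver(bronze_rows):
    """Validate and deduplicate raw rows; malformed rows are dropped, not repaired."""
    seen, silver = set(), []
    for row in bronze_rows:
        event_id, customer = row.get("event_id"), row.get("customer_id")
        try:
            amount = float(row.get("amount_usd"))
        except (TypeError, ValueError):
            continue  # missing or non-numeric amount
        if not event_id or not customer or event_id in seen:
            continue  # incomplete row or duplicate delivery
        seen.add(event_id)
        silver.append(
            {"event_id": event_id, "customer_id": customer, "amount_usd": amount}
        )
    return silver


def to_gold(silver_rows):
    """Aggregate cleaned rows into revenue per customer."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["customer_id"]] += row["amount_usd"]
    return dict(totals)


if __name__ == "__main__":
    # Bronze: raw records exactly as received, including a duplicate and a bad value.
    bronze = [
        {"event_id": "e1", "customer_id": "c1", "amount_usd": "10.0"},
        {"event_id": "e1", "customer_id": "c1", "amount_usd": "10.0"},
        {"event_id": "e2", "customer_id": "c2", "amount_usd": "oops"},
    ]
    print(to_gold(to_silver(bronze)))
```

Keeping Bronze unmodified means the Silver rules can be changed and replayed later without losing the original records.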

FAQs

Where should I start if my company doesn’t trust its data yet?

Start by treating data as a strategic asset that drives decisions. Create a clear plan aimed at improving its quality, accuracy, and timeliness. This means prioritizing investments in areas like data governance, proper documentation, and reliable measurement systems. These efforts build trust in your data and show its value to your organization. By setting clear goals for accuracy and completeness, you can help shape a culture that trusts data and uses it to make smarter decisions.

What does it mean to treat data like a product in practice?

Treating data like a product means approaching it with the same level of care and strategy you'd apply to a consumer product. This involves establishing clear ownership, maintaining high-quality standards, and ensuring it's user-friendly. Key actions include assigning responsibilities, creating service level agreements (SLAs), and enhancing how easily the data can be found and used. When organizations align their data strategies with business objectives, they make data accessible, dependable, and actionable - turning it into a powerful business asset.

How do I set up data governance without slowing teams down?

To bring data governance into play without bogging down your teams, make it part of their everyday workflows as a tool for empowerment. Start by establishing clear ownership, setting consistent standards, and leveraging automation to cut down on tedious manual tasks. A scalable data operating model that fits seamlessly into your workflows can ensure compliance while keeping things efficient. This method not only simplifies decision-making and upholds data quality but also turns governance into a strength that drives innovation forward.
