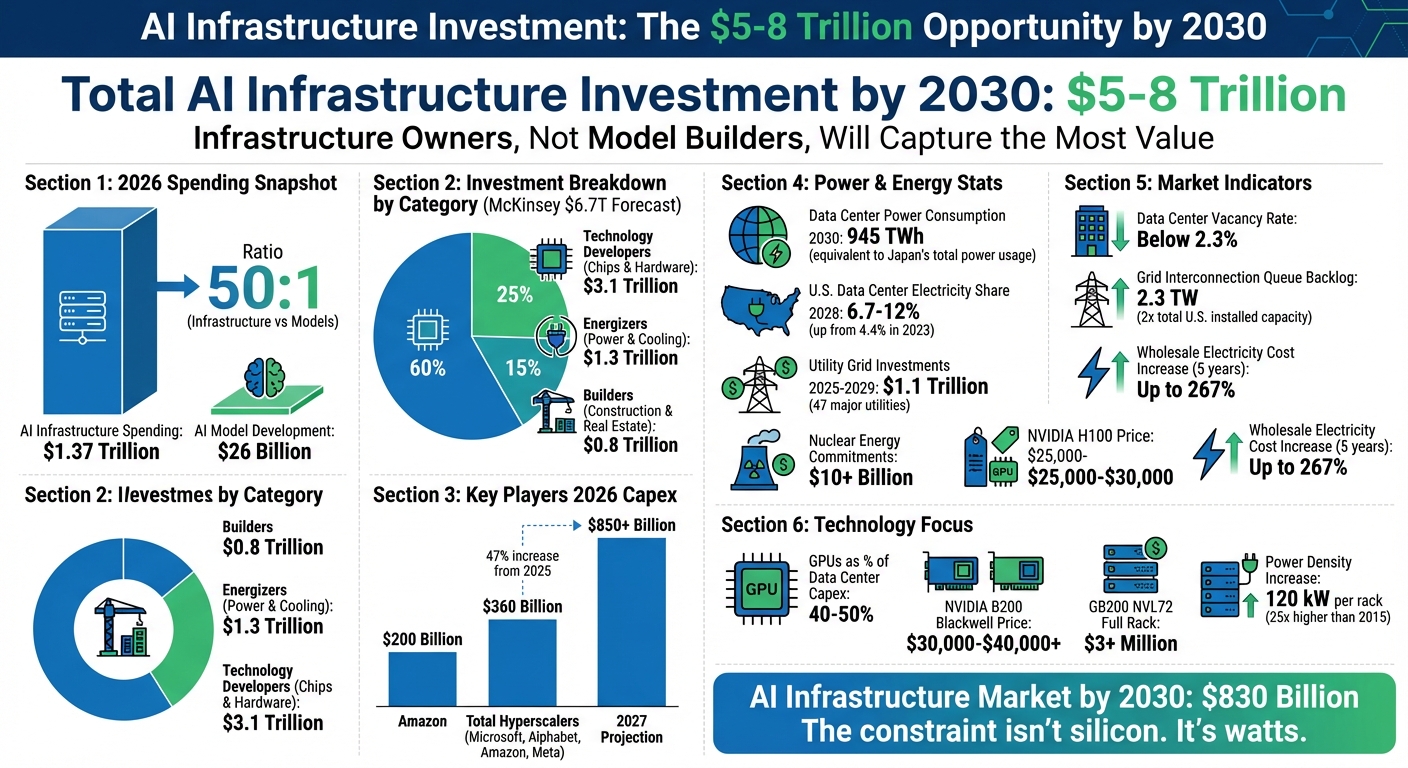

By 2030, AI infrastructure spending will hit between $5 trillion and $8 trillion, reshaping the digital economy. The big winners? Not the companies creating AI models, but those controlling the underlying infrastructure - data centers, power systems, and cooling technologies. Here's what you need to know:

- 2026 Spending Trends: AI infrastructure spending is projected to reach $1.37 trillion, dwarfing the $26 billion allocated to AI model development.

- Big Players: Amazon, Google, Meta, and Microsoft are leading investments, with Amazon alone allocating $200 billion in 2026.

- Power and Cooling: Energy demand for AI is skyrocketing, with data centers consuming 945 TWh by 2030 - the same as Japan's total power usage today.

- Key Technologies: GPUs, liquid cooling, and advanced networking dominate spending, with NVIDIA's chips taking center stage.

Infrastructure owners - those who provide the "pipes" AI runs on - are positioned to profit the most. With data center vacancy rates below 2.3% and grid capacity in high demand, these operators are securing premium pricing and long-term contracts. If you're betting on AI's future, focus on the companies building the backbone, not just the algorithms.

AI Infrastructure Investment Breakdown: $5-8 Trillion by 2030

InfraAI'26: The Global Backdrop: Geopolitics, Macro-Economics and AI Infrastructure pre-2030

sbb-itb-9cd970b

Breaking Down the PwC Forecast and Spending Trends

PwC estimates that $5 trillion to $8 trillion will be invested in AI infrastructure by 2030, marking one of the largest capital investment cycles in recent history. This isn't just speculative spending - it's largely driven by hardware constraints. As of 2026, the shift from training AI models to serving them (inference) is expected to account for 60% to 70% of total AI compute demand among hyperscalers, fundamentally reshaping how capital is allocated [2]. Let’s take a closer look at how this massive projection is distributed across key infrastructure categories.

Spending is not evenly distributed. According to McKinsey, the $6.7 trillion forecast breaks down into three main categories: Technology Developers (chipmakers and hardware manufacturers) will secure 60% of the investment, totaling $3.1 trillion; Energizers (power and cooling systems) will receive 25%, or $1.3 trillion; and Builders (construction and real estate) will account for 15%, or $0.8 trillion [1]. AI chips, which operate at 10x to 15x the power density of traditional CPUs, are driving the increased importance of energy infrastructure.

Where the Money Is Going: Core Infrastructure Categories

The largest share of investments will go toward what McKinsey refers to as "short-lived assets" - GPUs and custom silicon, which make up approximately 67% of total spending [2]. These accelerators, with a 2- to 3-year refresh cycle, require constant reinvestment [8]. Hyperscalers like Amazon, Google, and Microsoft are developing in-house silicon (e.g., Amazon's Trainium, Google's TPUs, and Microsoft's Maia chips) to reduce dependency on third-party providers and better optimize inference workloads.

Power infrastructure is the second-largest spending category. Across the U.S., utilities from 47 major companies are projecting $1.1 trillion in capital investments through 2029 to upgrade electrical grids for AI demand [3]. This highlights a strategic shift as energy providers position themselves as essential partners in AI's growth, locking in long-term contracts with hyperscalers and data center operators. Baron Fung, Senior Research Director at Dell’Oro Group, observes that "servers optimized for AI training and specialized workloads could account for approximately two-thirds of total data center infrastructure spending by 2030" [7].

These categories emphasize the wide-ranging components supporting the broader market forecasts.

Comparing Forecasts: PwC, McKinsey, and Dell’Oro

Different analysts approach their forecasts with varying scopes, leading to significant differences in projections. PwC includes the entire infrastructure ecosystem - power, cooling, and grid upgrades - while Dell’Oro focuses exclusively on IT equipment. The table below highlights these differences:

| Analyst | 2030 Projection | Scope & Methodology |

|---|---|---|

| PwC | $5T – $8T | Total AI infrastructure, including power generation, cooling, and grid upgrades |

| McKinsey | $6.7T | Global data center capex using a gigawatt capacity model; 70% AI-driven [1] |

| Dell’Oro Group | $1.7T | IT equipment only (servers, storage, networking); excludes power and construction [7] |

| NVIDIA CEO | $3T – $4T | Industry-wide AI infrastructure spend [2] |

Dell’Oro’s $1.7 trillion estimate is much lower because it focuses solely on IT equipment, excluding broader infrastructure like power generation, land acquisition, and construction, which PwC and McKinsey incorporate [7]. McKinsey’s capacity-driven model predicts global data center capacity will nearly triple, growing from 82 GW in 2025 to 207 GW by 2030, with AI workloads driving 70% of this demand [1]. Unlike the fiber overbuild of the 1990s, today’s infrastructure expansion is backed by firm contracts.

This breakdown underscores that the companies managing the foundational infrastructure will ultimately reap the most rewards from AI’s rapid growth.

Why Infrastructure Owners Will Capture the Most Value

AI model developers face intense competition and steep R&D risks, but infrastructure owners are in a much more stable position. They benefit from the AI boom without being directly tied to the success of any single AI application [3]. Physical infrastructure like power, land, and specialized labor is in limited supply, which naturally prevents overbuilding and supports steady pricing [9]. This creates a reliable setup for generating consistent revenue.

Today's infrastructure expansion is "contracts-first," meaning facilities are often leased out before they're even built [1]. In fact, data center vacancy rates in major metro areas have dropped to historic lows - below 2%. This scarcity has shifted the market dynamics, allowing operators to charge premium prices for large power blocks instead of offering volume discounts [9].

Infrastructure providers also see revenue growth as leases reset to reflect current market rates. Many leases expiring by 2029 are priced 15% to 25% below today's rates [9]. On top of that, grid interconnection queues have grown to six or more years, with a backlog of 2.3 TW - double the total installed generation capacity in the U.S. [9]. These bottlenecks make it nearly impossible to replicate or bypass the infrastructure, solidifying the role of infrastructure operators at the core of the AI ecosystem [5].

Hyperscalers and Neocloud Providers Driving Demand

Big names like Microsoft, Alphabet, Amazon, and Meta are fueling the demand for infrastructure. Their combined capital expenditures are expected to hit $360 billion in 2025, a 47% increase from the previous year [3]. By 2026, this figure could surpass $700 billion, and projections for 2027 suggest it may climb to over $850 billion [9].

Microsoft has already committed $3.3 billion for a data center campus in Wisconsin as part of its $80 billion fiscal year 2025 capex plan [3]. Meta is working on a massive 2,250-acre site in Louisiana (Hyperion), costing $10 billion and powered by 5 GW from a nearby nuclear plant. Another site, Prometheus, is slated for Ohio in 2026 [4]. Oracle, meanwhile, has signed a five-year deal worth $300 billion with OpenAI, starting in 2027, following an earlier $30 billion cloud services agreement [4].

"The limiting factor in AI infrastructure deployment is no longer GPU availability, it's lack of data centers." - Wes Cummins, CEO, Applied Digital [3]

Specialized providers like CoreWeave and Lambda, often called "neoclouds", are also stepping up to meet the demand. However, these companies come with different credit risks compared to traditional hyperscalers [9]. A notable example is the Stargate Project - a joint venture between SoftBank, OpenAI, and Oracle - which plans to invest $500 billion in U.S. AI infrastructure, including eight data centers in Abilene, Texas [4]. This surge in demand allows infrastructure operators to secure premium pricing and long-term contracts.

Beyond hyperscalers, power providers are also reshaping the infrastructure landscape.

Power Companies: The Foundation of AI Infrastructure

Power utilities are shifting from unpredictable demand models to stable revenue streams through long-term Power Purchase Agreements (PPAs) and upfront payments from hyperscalers [10]. By 2028, U.S. data centers are expected to consume between 6.7% and 12% of the country's electricity, up from 4.4% in 2023 [3]. Electricity demand for data centers is projected to grow from 415 TWh in 2024 to 945 TWh by 2030 [3].

To meet this demand, 47 major utilities across the U.S. are planning $1.1 trillion in capital investments through 2029 to upgrade electrical grids [3]. Tech companies are also investing heavily in nuclear energy, committing over $10 billion to partnerships involving Small Modular Reactors (SMRs). Nuclear power is uniquely suited for AI infrastructure because it provides the carbon-free, 99.999% uptime baseload power that hyperscalers require [3]. For instance, Brookfield and Bloom Energy announced a $5 billion strategic partnership on October 12, 2025, to address power constraints [3].

| Utility Category | Investment Focus | 2025-2029 Capex |

|---|---|---|

| Grid Operators | Transmission upgrades, interconnection capacity | $1.1 trillion (47 major utilities) [3] |

| Nuclear Partnerships | SMRs, baseload power for hyperscalers | $10+ billion (tech giants) [3] |

| Strategic Partnerships | Distributed generation, fuel cells | $5 billion (Brookfield-Bloom) [3] |

Wholesale electricity costs near data centers have surged by as much as 267% over the past five years [3]. With long-term contracts in place, utilities are becoming indispensable partners in the AI boom. These investments ensure that infrastructure providers can lock in stable, high-margin revenue streams, forming the backbone of the AI ecosystem.

"If you're bullish on AI adoption, you have to be bullish on power and utilities" - BlackRock [3]

The real engine of AI isn’t just GPUs or servers - it’s the power that keeps everything running.

Technologies Capturing the Largest Share of Investment

As AI infrastructure spending continues to surge, certain technology sectors are drawing the bulk of this investment. The focus is on critical components that form the backbone of AI advancements.

Chipmakers and Computing Hardware

Advanced GPUs are at the heart of AI infrastructure spending, making up 40% to 50% of total data center capital expenditures [11][12]. Unsurprisingly, NVIDIA leads the pack, with its data center revenue projected to skyrocket from $15 billion in fiscal year 2023 to around $115 billion in fiscal year 2025 [11]. Their H100, H200, and Blackwell (B200) GPUs are essential for handling AI training and inference workloads in hyperscale facilities.

NVIDIA's dominance is evident in its pricing. The H100 GPU is priced between $25,000 and $30,000, while the newer B200 Blackwell GPU ranges from $30,000 to over $40,000 per unit [11]. For full systems, like the GB200 NVL72 rack - featuring 72 B200 GPUs and 36 Grace CPUs - the cost exceeds $3 million [11]. Hyperscalers are expected to allocate about 75% of their 2026 AI infrastructure budgets, roughly $450 billion, to such systems [12].

Meanwhile, hyperscalers are also exploring custom silicon to reduce reliance on NVIDIA. Examples include Google’s TPUs, AWS Trainium and Inferentia, and Microsoft’s Maia 100. Supporting technologies like HBM3e memory and advanced packaging (e.g., TSMC's CoWoS) are also in high demand but face significant supply constraints. AMD, with its MI300X GPU priced between $10,000 and $15,000, offers a more affordable alternative but still lags behind NVIDIA in market share [11].

High Bandwidth Memory (HBM3e) is another crucial element, especially for handling the immense bandwidth requirements of large model inference. For instance, Blackwell GPUs need 192 GB of HBM3e [11]. SK Hynix, which controls about 50% of the HBM market, is struggling to meet demand. Similarly, TSMC’s CoWoS packaging technology is facing a supply-demand gap of 2× to 3× through 2025 [11].

Beyond raw computing power, the supporting infrastructure for networking, storage, and cooling is equally vital.

Networking, Storage, and Cooling Systems

While GPUs drive the core computing capabilities, the surrounding infrastructure plays an equally critical role - and comes with hefty costs. High-performance networking is essential for synchronizing data across thousands of GPUs in training clusters. The industry is rapidly adopting 400G and 800G interconnects. NVIDIA’s InfiniBand remains the preferred choice for low-latency training workloads, but Broadcom’s Spectrum-X Ethernet platform is gaining traction due to its compatibility with existing systems [11]. Networking equipment alone is expected to account for $72 billion of the $716 billion in hyperscaler capex by 2026 [9].

Cooling systems are becoming a significant expense as power densities soar. Traditional air cooling, which worked for racks consuming about 5 kW, is no longer sufficient for modern AI racks like NVIDIA’s Blackwell GB200 systems, which draw around 120 kW per rack - 25 times the power of a typical 2015 hyperscale rack [11]. This shift has accelerated the adoption of Direct Liquid Cooling (DLC) and immersion cooling technologies, which now represent 15% to 20% of total data center construction costs [11][12].

"The constraint isn't silicon. It's watts." – Tech Economics [12]

Electrical infrastructure, including transformers, switchgear, busbar systems, and copper wiring, adds another layer of expense. With demand surging, lead times for transformers have stretched to 3–4 years [12].

| Component | Key Players | Estimated Gross Margin | Role in AI Stack |

|---|---|---|---|

| Advanced GPUs | NVIDIA, AMD | 70–73% | Primary compute engine for training/inference |

| HBM Memory | SK Hynix, Samsung | 45–50% | High-speed memory for large model weights |

| Networking | NVIDIA, Broadcom | 60–65% | Interconnects for GPU clusters (e.g., 800G) |

| Cooling/Power | Vertiv, Eaton, Schneider | 30–40% | Thermal management and electrical distribution |

These investments highlight the importance of not just deploying cutting-edge computing chips but also building and maintaining the infrastructure that supports them. The companies providing these technologies are more than just suppliers - they are the gatekeepers of the AI revolution.

How SaaS and AI Companies Can Align with This Shift

SaaS and AI companies need to go beyond simply using infrastructure - they must take an active role in optimizing it to stay competitive.

Implementing ROC Discipline to Optimize Infrastructure Investments

Adopting KPMG's "Return-on-Compute" (ROC) framework can guide smarter infrastructure decisions. This method helps determine if an investment will generate positive cash flow. The formula is straightforward:

(Price per token – Variable cost per token) × Tokens served × Utilization – (CapEx + Fixed OpEx) [13].

By applying this discipline, companies can ensure that every dollar spent on compute delivers measurable returns.

"Infrastructure owners that embed a 'return-on-compute' discipline - optimizing each swim-lane before committing the next dollar - should translate today's spend into tomorrow's cash-flows." – KPMG [13]

Prioritize inference over training. While hyperscalers are pouring billions into large-scale training clusters, token prices are dropping fast. For instance, GPT-4 Turbo rates fell from $30 to $5–7 per million tokens in just one year [13]. This opens the door to focusing on fine-tuning and scaling inference rather than investing heavily in pre-training. A great example is DeepSeek, which in January 2025 achieved frontier-level reasoning accuracy on a cluster costing just one-tenth of GPT-4’s budget by using reinforcement learning with human feedback [11][13].

Take advantage of open-source models. Tools like DeepSeek-R1 and LLaMA allow companies to reduce costs by moving away from expensive proprietary APIs. This shift enables businesses to focus on applications, proprietary data, and distribution channels rather than relying on closed systems [13].

By laying a solid foundation with ROC and compute efficiency, companies can then focus on building long-term infrastructure advantages.

Building Long-Term Value in AI Ecosystems

As AI infrastructure investments grow, aligning compute and power strategies is essential for staying ahead.

Secure compute capacity early. With colocation vacancy rates in North America at just 2.3% [1], waiting too long to secure infrastructure could leave you without options. Multi-year agreements with cloud providers or equity-for-compute deals can guarantee access. For example, in September 2025, NVIDIA invested $100 billion in OpenAI - paid entirely in GPUs for data center projects [4]. Similarly, Oracle signed a $30 billion cloud services deal with OpenAI in June 2025, surpassing its total cloud revenue from the previous fiscal year [4].

Address power and memory bandwidth constraints. As AI models become more reasoning-intensive, infrastructure must support High Bandwidth Memory (HBM) and liquid cooling. For instance, a single Blackwell GB200 NVL72 rack consumes 120 kW - 25 times the power density of a 2015-era rack [11]. Securing consistent power, such as through nuclear or Small Modular Reactor (SMR) agreements, can provide a competitive edge. Microsoft exemplifies this with its 20-year power purchase agreement with Constellation Energy to restart a reactor at Three Mile Island for its AI data centers [1].

Embrace agentic workflows for efficiency. Instead of relying on massive, monolithic models, develop systems where specialized agents work together via APIs. This approach enhances problem-solving efficiency and aligns with the growing trend toward communication- and memory-heavy architectures [13]. Companies that build these smart, collaborative systems will be well-positioned to capitalize on shared infrastructure investments.

These strategies not only reduce operating costs but also position companies to thrive in an industry where controlling the foundational infrastructure creates lasting value.

| Strategy | Key Action | Expected Outcome |

|---|---|---|

| ROC Discipline | Assess compute investments using the ROC formula | Generate positive cash flows from infrastructure spend |

| Inference Focus | Shift focus to post-training and inference scaling | Achieve up to 10× cost savings without sacrificing quality |

| Open-Source Leverage | Use models like DeepSeek-R1 and LLaMA | Lower API costs; focus shifts to data and distribution |

| Strategic Partnerships | Secure multi-year offtake or equity-for-compute deals | Guaranteed access to scarce compute resources |

| Power Optimization | Lock in nuclear or SMR power agreements | Ensure uninterrupted power for critical workloads |

Conclusion

By 2030, investments in AI infrastructure are expected to soar between $5 trillion and $8 trillion, far outpacing spending on AI models. To put this into perspective, infrastructure spending in 2026 alone is projected to hit $1.37 trillion, compared to just $26 billion for AI models - a staggering ratio of over 50 to 1 [6]. The companies dominating the physical layer - chips, data centers, power systems, and networking - stand to gain the most in this transformative era.

"We are at a tipping point in business and society where AI will revolutionize how we work, live and interact at scale" - Mohamed Kande, Global Advisory Leader at PwC [14]

Here’s the reality: without cutting-edge chips, reliable power systems, and high-capacity data centers, even the most advanced AI models won’t deliver [5].

For SaaS and AI companies, staying ahead means aligning with infrastructure trends. This includes adopting a Return-on-Compute mindset, securing long-term access to compute resources, and addressing power limitations. The era of zero marginal cost software is over - AI inference now requires enormous compute power and energy [15]. These strategic moves will separate the leaders from the rest.

The real winners? Not just the model creators, but the infrastructure players: hyperscalers pouring billions into data centers, utilities ensuring steady power supply, and operators managing scarce grid capacity. With colocation facilities nearing full capacity [1], the race to secure infrastructure access is heating up.

The shift is already happening. Companies that view infrastructure as the backbone of competitive advantage - and act decisively - will be primed to succeed in an AI-driven economy that could reach $830 billion by 2030 [5].

FAQs

What is included in “AI infrastructure” in these forecasts?

AI infrastructure consists of the physical and digital systems that make AI operations possible. This includes data centers, which house the hardware; power distribution and cooling systems, which ensure smooth and efficient operation; and semiconductor manufacturing infrastructure, which produces the chips powering AI models. Additionally, robust energy systems are critical to support the immense computational demands of AI at scale. Together, these components create the foundation for running AI workloads effectively.

Why do “pipes” companies profit more than model builders?

Companies referred to as “pipes” make higher profits because they own the infrastructure - such as data centers, networking, and power systems - that AI development and deployment rely on. By controlling these critical components, they can secure the lion’s share of the value generated from investments in AI infrastructure. Their services are indispensable for scaling and running AI technologies effectively.

How can a SaaS company secure enough compute and power through 2030?

To keep up with the growing demands of compute and power through 2030, SaaS companies should consider teaming up with or investing in infrastructure providers that handle AI's core systems. With global spending on AI infrastructure projected to hit trillions, working closely with hyperscalers, cloud providers, or securing dedicated resources becomes a key move. Staying updated on major developments and initiatives from industry giants like Meta, Microsoft, and Google can help businesses prepare for future resource requirements.

Related Blog Posts

- From Hype to High-Value Exits: AI's Role in Private Equity's Future

- The OpenAI Ecosystem: Why Every Dollar Sam Altman Raises Spins the AI Economy Faster

- The Hidden Network Effect Between OpenAI, Microsoft, Nvidia, and Energy Companies

- 8. $4.9 Trillion in M&A in 2025. A Record. 25% of Every Deal Over $5 Billion Had an AI Theme. This Is Not a Cycle. This Is a Structural Shift in Who Controls Valuable Assets. CNBC