Why does Reddit dominate AI citations while your blog gets ignored? The answer lies in how AI systems evaluate credibility, engagement, and relevance. Here's the breakdown:

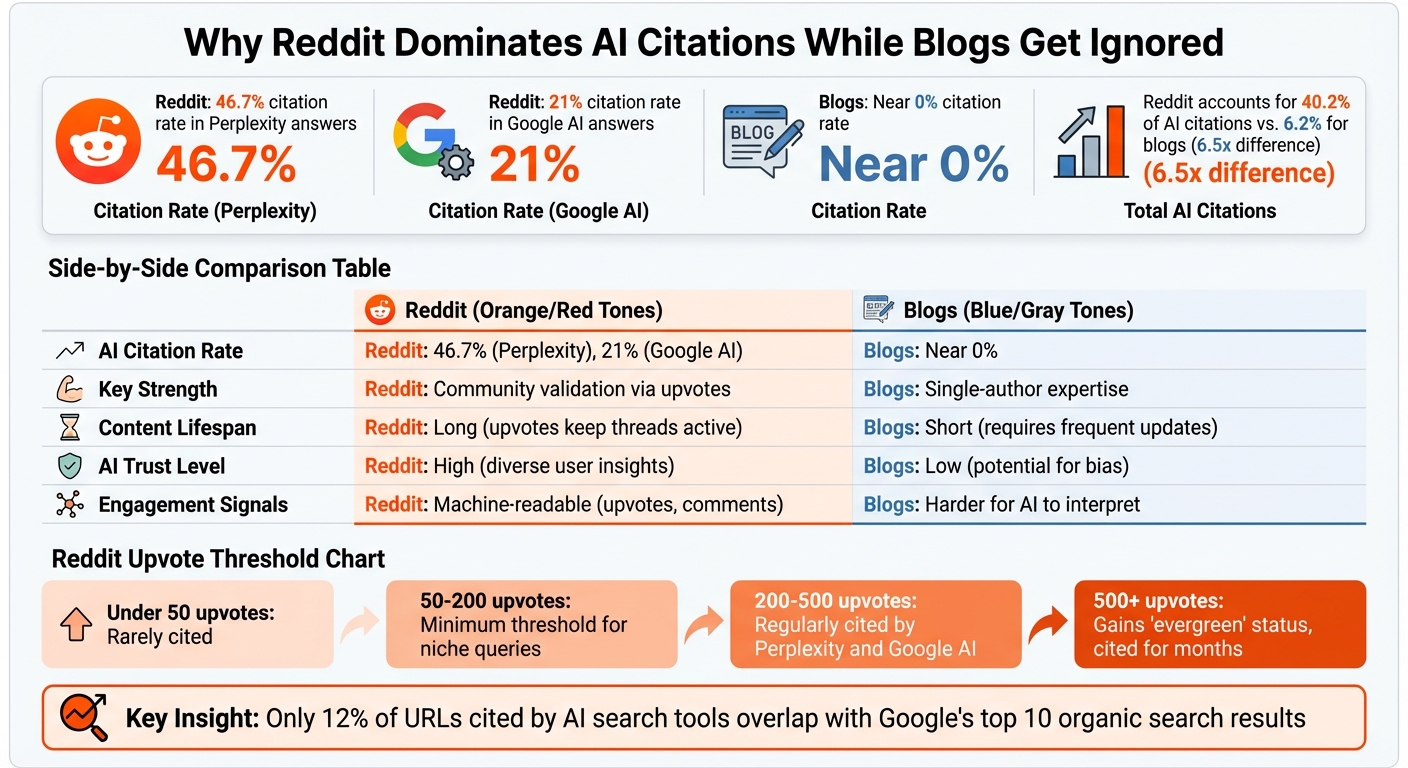

- Reddit's dominance: Reddit appears in 46.7% of Perplexity answers and 21% of Google AI responses, thanks to its community-driven content and upvote system.

- AI preferences: AI trusts Reddit's collective validation (upvotes, comments) over single-author blogs, which are often flagged as biased or outdated.

- Engagement metrics: Reddit's real-time activity and diverse perspectives make it a go-to source for AI, while blogs lack the same dynamic signals.

- Content decay: Blogs lose visibility quickly without updates, while Reddit threads can stay relevant for years through ongoing activity.

Quick Comparison

| Factor | Reddit | Blogs |
|---|---|---|
| AI Citation Rate | 46.7% (Perplexity), 21% (Google AI) | Near 0% |
| Key Strength | Community validation via upvotes | Single-author expertise |
| Content Lifespan | Long (upvotes keep threads active) | Short (requires frequent updates) |
| AI Trust Level | High (diverse user insights) | Low (potential for bias) |
| Engagement Signals | Machine-readable (upvotes, comments) | Harder for AI to interpret |

What can you do? Structure your blog for AI, update content frequently, and leverage platforms like Reddit to build backlinks. Without these changes, the gap will only grow.

Reddit vs Blogs: AI Citation Rates and Engagement Comparison

The Numbers: Reddit's Citation Advantage Over Blogs

Reddit Engagement vs. Blog Engagement

The numbers tell a compelling story: Reddit outpaces traditional blogs when it comes to AI citations. In a study analyzing 100,000 AI-generated responses, Reddit accounted for 40.2% of citations, compared to just 6.2% for blogs - a difference of 6.5 times[9].

This gap can be attributed to how AI systems evaluate engagement. Reddit's upvote system acts as a clear, machine-readable indicator of quality, easily accessible through APIs. In fact, Google reportedly spends $60 million annually for real-time access to Reddit's engagement metrics[1]. On the other hand, blog metrics like pageviews and bounce rates don’t register as reliable trust signals for AI systems.

Interestingly, Reddit posts don't need to be massively popular to gain AI recognition. Posts with fewer than 20 upvotes can still outperform blogs when they closely match the query, because AI values topical relevance and community-driven validation over raw popularity[2]. For example, a Reddit thread with around 200 upvotes consistently qualifies for AI citations, while a blog post with 10,000 pageviews might remain invisible to AI tools[1].

| Upvote Count | AI Citation Eligibility |
|---|---|
| Under 50 | Rarely cited |
| 50–200 | Minimum threshold for niche queries |
| 200–500 | Regularly cited by tools like Perplexity and Google AI |
| 500+ | Gains "evergreen" status, cited across platforms for months |

This advantage is amplified by Reddit's community-driven dynamics, which AI systems trust more than single-author content.

Community Validation vs. Single-Author Content

AI systems view Reddit threads as collective filters of consensus rather than individual opinions. When multiple users in a discussion recommend the same solution, AI interprets this as verified, crowd-sourced expertise[7]. By contrast, a single blog post - even one written by a recognized expert - provides only one perspective. If the author has a commercial interest, AI may flag the content as biased[7].

"AI trusts the crowd more than the expert when the expert has something to sell. This shift is not reversible."

– Wenstein Research[7]

Reddit threads typically feature contributions from 15 or more users, often diving into granular details like hidden fees, customer support experiences, or integration challenges[7]. This diversity of viewpoints provides data points that AI systems consider trustworthy, unlike the limited perspective of a single-author blog. This trend underscores a broader shift in how AI evaluates content, with community-driven insights consistently outperforming traditional blog formats.

AI's preference for Reddit isn't just about engagement; it's also about how AI tools select sources.

How Perplexity and Google AI Select Sources

Reddit dominates AI citations, appearing in 46.7% of Perplexity responses, 21% of Google AI answers, and even 11.3% of ChatGPT outputs, despite ChatGPT's preference for other sources[1][4].

AI tools prioritize content that is fresh, relevant, and engaging. Reddit posts are often indexed within minutes, while blogs can take days to appear in search results[1]. Over half of the Reddit content cited by AI comes from Q&A-style threads, which are easy for AI to extract and analyze[2].

Interestingly, only 12% of URLs cited by AI search tools overlap with Google's top 10 organic search results[3]. This disconnect between traditional SEO rankings and AI visibility highlights a key insight: even a high-ranking blog can go unnoticed if it lacks the engagement signals and structural format that AI systems prioritize.

The Math Behind the Growing Gap

Reddit's 10x Engagement Multiplier Effect

Reddit's edge in citations comes from its ability to generate compounded engagement signals that AI systems can measure and rely on. For example, Perplexity uses an L3 XGBoost quality gate, which incorporates Reddit's upvote system as a machine-readable indicator of quality [10][11]. These upvote counts act as a crucial metric for AI evaluation, ensuring Reddit content remains relevant for long-term citations. When paired with active comment threads, this combination significantly boosts Reddit’s appeal as a citation source.

Additionally, the Query Fan-Out method plays a role by breaking down queries into multiple sub-queries, increasing the likelihood that Reddit's diverse discussions align with what AI systems are looking for [5].

Content Decay and AI Indexing

While Reddit benefits from its engagement mechanisms, blog content often struggles with a phenomenon called content decay. Without consistent updates, blog visibility drops sharply over time. Research shows that citation rates for blogs fall by about 40% between 30 and 90 days, and by 65% after 90 days [11]. AI platforms like Perplexity factor in a time decay parameter, which reduces the visibility of older content unless it’s refreshed regularly [10][11]. In fact, content updated within 30 days sees 3.2 times more citations compared to older material [5].

"The Freshness Floor is higher for AI. You can coast on SEO results for months or years. You cannot coast on AI."

– u/MathematicianBanda [11]

Interestingly, although many Reddit posts are quite old (around 900 days on average), the platform's design allows for continuous activity. New comments and discussions can effectively "reset" a post's freshness signal, keeping it relevant for AI indexing [2][10][13]. Blogs, on the other hand, are typically static unless updated manually, making them more prone to fading into obscurity. This dynamic explains the widening gap in citations between Reddit and blogs.

| Content Age | Citation Impact | What Happens |
|---|---|---|
| 0–7 days | Peak citation eligibility | AI systems prioritize heavily |
| 30–90 days | ~40% citation rate drop | Visibility begins fading |
| 90+ days | ~65% citation rate drop | Effectively invisible without updates |
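The decay figures above can be turned into a rough lookup for triaging old posts. This is only a sketch: the function name `citation_retention` is hypothetical, the step boundaries are read straight from the quoted percentages, and the source gives no figure for days 8 to 29, so the code assumes no drop in that window.

```python
def citation_retention(age_days: int) -> float:
    """Return the assumed share of peak citation rate a blog post retains.

    Values mirror the decay figures quoted in this article; they are
    illustrative, not a model of any real ranking system.
    """
    if age_days < 0:
        raise ValueError("age_days must be non-negative")
    if age_days < 30:
        # Assumption: no figure is given for days 8-29, so treat as no drop.
        return 1.0
    if age_days <= 90:
        return 0.60  # ~40% drop between 30 and 90 days
    return 0.35      # ~65% drop after 90 days


# Example: rank a few posts by how much citation potential they have lost.
post_ages = {"launch-recap": 12, "pricing-guide": 45, "2023-roundup": 400}
for slug, age in sorted(post_ages.items(), key=lambda item: citation_retention(item[1])):
    print(f"{slug}: {age} days old, retains about {citation_retention(age):.0%}")
```

Posts at the top of that printout are the ones furthest past the freshness window and the first candidates for an update.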

The Feedback Loop: How High Engagement Drives More Citations

Reddit threads with high engagement - specifically those reaching 500+ upvotes - achieve what's known as "evergreen citation status." These threads continue to be cited by AI systems for months or even years [1]. This visibility creates a feedback loop: more citations lead to more visibility, which attracts additional upvotes and comments, further reinforcing the thread's prominence. Between March and June 2025, Reddit citations in AI-generated summaries jumped by 450% [12].

In contrast, blogs lack the mechanisms to generate ongoing engagement signals. Once a blog post is published, it often remains static, unable to accumulate real-time upvotes or comments. This limits its ability to maintain relevance in the eyes of AI algorithms.

"Reddit threads are no longer just social media posts. They are structured data inputs into the most widely used AI systems in the world, with upvote counts serving as machine-readable quality scores."

– Sam Wilson, Author, Upvote.net [1]

Early engagement plays a critical role in determining long-term citation potential. For Reddit, clicks and interactions in the first few minutes or hours often set the stage for a thread's success [10][11]. This initial burst of activity gives Reddit a distinct advantage, while blogs often struggle to generate the same momentum.

How to Increase Your Blog's AI Citation Rate

Want your blog to compete with platforms like Reddit for AI citations? Here's how you can optimize your content to stand out.

Structure Your Blog Content for AI Systems

AI doesn't "read" your content like a person - it extracts key information. The first 30% of your page is crucial, as 44.2% of all citations come from this section alone [14][5]. Start each section with a concise, 40–60 word answer right under the header. This gives AI a clear and digestible snippet to work with.

Use question-based headers (H2 or H3) instead of statements. These are 3.4x more likely to be extracted by AI systems [14]. For example, instead of "Benefits of Email Marketing", go with "What Are the Benefits of Email Marketing?" This aligns with how users phrase their queries.
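A quick way to check a draft against both guidelines is a small script that flags non-question headers and lead paragraphs outside the 40–60 word range. This is a minimal sketch that assumes the draft is written in Markdown with `##` and `###` headers; the function name `audit_sections` and the report fields are made up for illustration.

```python
import re

# Matches H2/H3 Markdown headers only ("## Title" or "### Title").
HEADER_RE = re.compile(r"^#{2,3}\s+(.*\S)\s*$")


def audit_sections(markdown, min_words=40, max_words=60):
    """Report, for each H2/H3 section, whether the header is a question
    and whether the first paragraph under it falls in the word range."""
    lines = markdown.splitlines()
    report = []
    for i, line in enumerate(lines):
        match = HEADER_RE.match(line)
        if match is None:
            continue
        # Skip blank lines between the header and its first paragraph.
        j = i + 1
        while j < len(lines) and not lines[j].strip():
            j += 1
        # Collect words until the paragraph ends or another header starts.
        words = []
        while j < len(lines) and lines[j].strip() and not lines[j].startswith("#"):
            words.extend(lines[j].split())
            j += 1
        report.append({
            "header": match.group(1),
            "is_question": match.group(1).endswith("?"),
            "lead_words": len(words),
            "lead_ok": min_words <= len(words) <= max_words,
        })
    return report


draft = "\n".join([
    "## What Are the Benefits of Email Marketing?",
    "",
    " ".join(["word"] * 45),  # stand-in for a 45-word answer
    "",
    "## Benefits of Email Marketing",
    "",
    "Too short.",
])
for section in audit_sections(draft):
    print(section)
```

The check is deliberately crude: it counts whitespace-separated words and treats any header ending in "?" as a question, which is usually enough to catch sections that need rewriting.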

When comparing features or pricing, opt for tables instead of paragraphs. Tables have an 81% extraction rate, compared to just 23% for prose [14]. Also, place statistics directly within the text alongside inline citations. AI systems process this format more effectively than footnotes.

To further improve your blog's visibility, implement structured data like FAQPage, HowTo, and Article schema. This can boost citation rates by 78% [14]. These schema types signal to AI crawlers that your content is well-organized and authoritative.
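As an illustration of the FAQPage type, the sketch below builds the JSON-LD from a list of question-and-answer pairs. The `@type`, `mainEntity`, and `acceptedAnswer` names come from Schema.org; the helper name `faq_jsonld` and the sample text are placeholders.

```python
import json


def faq_jsonld(pairs):
    """Build a Schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; replace with the questions your page actually answers.
markup = faq_jsonld([
    ("How often should I update posts?", "Quarterly for most blog posts."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` element goes in the page's HTML. The questions and answers in the markup should match the text visible on the page.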

Use High-Traffic Platforms to Build Backlinks

Even with optimized blog structure, external validation is key. AI systems favor independent sources like Reddit, where 82% of citations come from earned media rather than owned content [7].

A smart approach is to engage on Reddit first, then link back to your blog. Answer questions in popular threads such as "What's the best X for Y?" or "Alternatives to X" within subreddits that have at least 50,000 subscribers. These threads are often repurposed in AI-generated answers [17].

Structure your Reddit responses for maximum impact: start with a one-line verdict, include a three-step method, highlight two common pitfalls, and provide one proof point. This format is highly extractable for AI systems.

Timing is critical. The first 60–90 minutes after posting determine whether your comment gains traction. Aim for 500+ upvotes, which is the threshold for "evergreen citation status" [1].

Update Content Regularly to Signal Freshness

AI systems prioritize current information. Content updated within the last 30 days is 3.2x more likely to be cited for time-sensitive queries than older material [19]. Unlike traditional SEO, where content can remain static, AI algorithms expect regular updates. In fact, freshness accounts for 40% of Perplexity's ranking factors, and 76.4% of ChatGPT's top-cited pages were updated within the last 30 days [16][18].

It's not enough to update the "last modified" date. AI models compare your content to past versions, so you need meaningful changes - like adding new statistics, revising claims, or including current-year references. For instance, aim to replace 3–5 statistics per 1,000 words and use phrases like "As of January 2026..." to embed temporal context [18][19].

Regular updates can dramatically improve citation rates. One SEO consultant refreshed over 200 pages by adding updated statistics, revising dates, and enhancing author credentials. This led to a 292% increase in AI citation rates, with some pages reaching an 83% citation rate [18]. To stay competitive, set a schedule:

- Product Pages: Update monthly with fresh stats, pricing, and specs.

- Data-Heavy Guides: Refresh quarterly by replacing outdated stats and adding recent studies.

- Blog Posts: Update quarterly to annually, focusing on stats, introductions, and FAQ sections.

| Content Type | Refresh Frequency | Actions |
|---|---|---|
| Product Pages | Monthly | Update stats, pricing, specs, and internal links [18] |
| Data-Heavy Guides | Quarterly | Replace 3–5 stats per 1,000 words; add new studies [18] |
| Blog Posts | Quarterly to Annually | Rewrite introductions, update stats, and add FAQ blocks [18] |
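To make the schedule actionable, a content inventory can be checked against these intervals. The sketch below makes several assumptions: "monthly" is treated as 30 days and "quarterly" as 90, blog posts use the stricter quarterly bound of their range, and the page records, type names, and URLs are invented for illustration.

```python
from datetime import date

# Intervals derived from the refresh schedule in this article.
REFRESH_INTERVAL_DAYS = {
    "product_page": 30,  # monthly
    "data_guide": 90,    # quarterly
    "blog_post": 90,     # assumption: stricter end of "quarterly to annually"
}


def pages_due_for_refresh(pages, today):
    """Return URLs whose last update is at least one refresh interval old."""
    due = []
    for page in pages:
        age_days = (today - page["last_updated"]).days
        if age_days >= REFRESH_INTERVAL_DAYS[page["type"]]:
            due.append(page["url"])
    return due


# Hypothetical inventory.
inventory = [
    {"url": "/pricing", "type": "product_page", "last_updated": date(2025, 12, 15)},
    {"url": "/guides/benchmarks", "type": "data_guide", "last_updated": date(2025, 12, 1)},
    {"url": "/blog/old-post", "type": "blog_post", "last_updated": date(2025, 9, 1)},
]
print(pages_due_for_refresh(inventory, today=date(2026, 1, 31)))
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job it would typically be `date.today()`.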

Don't overlook technical factors. Change the Schema.org dateModified property only when you make a real update, and ensure AI crawlers like PerplexityBot, GPTBot, and OAI-SearchBot are allowed in your robots.txt file. Surprisingly, 73% of websites block AI crawlers through technical barriers [6][15]. Removing these blocks is one of the fastest ways to improve your visibility.
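One way to test a robots.txt file against these crawlers is Python's standard `urllib.robotparser` module. The sketch below parses an inline sample rather than fetching a live file; the sample rules and the `example.com` URL are illustrative, and the function name `crawler_access` is made up.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["PerplexityBot", "GPTBot", "OAI-SearchBot"]


def crawler_access(robots_txt, url="https://example.com/blog/post"):
    """Return {crawler name: allowed?} for the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_CRAWLERS}


# Illustrative rules: GPTBot is blocked, every other agent is allowed.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

for bot, allowed in crawler_access(sample_robots).items():
    print(f"{bot}: {'allowed' if allowed else 'BLOCKED'}")
```

To check a live site, paste its robots.txt contents into `sample_robots`. This only covers robots.txt rules; blocks applied by a firewall or CDN will not show up here.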

Conclusion: Narrowing the Gap with the Right Approach

The analysis above highlights a clear path for blogs to challenge Reddit's dominance in AI citations. Reddit's 46.7% citation rate in Perplexity and 21% in Google AI Overviews isn't accidental - it benefits from a 10x engagement multiplier, real-time indexing, and community-driven validation signals that AI systems can easily interpret [4][7][12]. Blogs, by contrast, often lack these dynamic engagement indicators. However, with focused strategies, this gap can shrink.

As traditional SEO loses relevance - only 12% of AI-cited URLs appear in Google's top 10 - a shift toward "Machine Relations" is emerging. This approach prioritizes dynamic engagement signals over static rankings, creating a new opportunity for blogs [4]. By refining technical elements like structure, ensuring crawler accessibility (a problem for 73% of websites), and updating content every 30 days, blogs can significantly improve their AI citation rates [6][5]. Incorporating original research alone boosts citation chances by 55% to 120% [4].

The key lies in blending technical optimization with earned media strategies.

"Authority is becoming distributed. One authoritative source no longer determines truth. Consensus across multiple independent voices does. AI trusts the crowd more than the expert when the expert has something to sell." – Carlo Alberto Cuman, Founder, Wenstein [8]

A winning strategy combines owned and earned media. Engaging authentically in high-traffic subreddits, offering value first, and linking back to blogs as a resource can be highly effective. This "inverted distribution" approach aligns with the fact that 82% of AI citations come from earned media, not owned content [7][20]. For example, one SEO consultant who implemented these tactics - updating stats, adding schema markup, and actively participating on Reddit - achieved a 292% increase in AI citation rates [18].

Blogs that fail to adapt risk falling further behind. But for those ready to treat AI crawlers as a unique audience, build distributed authority, and keep content fresh, the opportunity to secure AI citations is both measurable and vital in this evolving content landscape.

FAQs

How can I tell if AI tools can crawl my site?

To check whether AI tools can access your site, start with your robots.txt file and confirm it isn't blocking AI crawlers such as PerplexityBot, GPTBot, and OAI-SearchBot. Then examine your server logs for visits from those crawlers. Structure your content clearly with well-organized metadata so it is easier to parse, and avoid obstacles like login requirements or CAPTCHAs that might block access. Review your site's accessibility regularly and stick to SEO best practices to improve your chances of being discovered and cited.
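For the server-log step, a short script can count requests whose user-agent string mentions one of the AI crawlers named earlier in this article. This is a sketch only: the log lines below are invented samples in a common access-log style, real user-agent strings are longer, and user agents can be spoofed, so treat the counts as a rough signal.

```python
from collections import Counter

AI_CRAWLER_TOKENS = ("PerplexityBot", "GPTBot", "OAI-SearchBot")


def count_ai_crawler_hits(log_lines):
    """Count log lines that mention each AI crawler token (case-insensitive)."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
    return hits


# Invented sample lines; real logs would be read from a file.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/post HTTP/1.1" 200 5120 "-" "GPTBot/1.0"',
    '203.0.113.9 - - [10/Jan/2026:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "PerplexityBot/1.0"',
    '198.51.100.2 - - [10/Jan/2026:10:06:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0"',
    '203.0.113.5 - - [10/Jan/2026:10:07:00 +0000] "GET /faq HTTP/1.1" 403 0 "-" "GPTBot/1.0"',
]
for bot in AI_CRAWLER_TOKENS:
    print(f"{bot}: {count_ai_crawler_hits(sample_log)[bot]} requests")
```

A crawler with zero hits over several weeks, or one that only receives 403 responses, is worth investigating alongside your robots.txt rules.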

What blog changes actually increase AI citations?

To increase the likelihood of your blog being cited by AI systems, concentrate on a few key strategies. First, make sure to include clear and accurate source citations throughout your content. This helps establish credibility and makes it easier for AI systems to recognize your blog as a reliable reference. Next, create content that is thorough and highly relevant to your audience. AI prioritizes structured and informative material, so aim to cover topics in depth while staying focused.

Another key factor is technical accessibility. Use structured data to ensure your blog is easy for AI to analyze and understand. Additionally, participating in high-authority online communities, such as Reddit, can boost your visibility. When contributing, focus on providing detailed, well-researched, and entity-rich responses that align with the discussion.

Lastly, prioritize clarity, depth, and semantic alignment in your writing. AI systems tend to favor content that is well-organized, informative, and avoids overtly promotional language. By following these steps, you can improve your blog's chances of being recognized and cited by AI systems.

How often should I update posts to avoid content decay?

To keep your content visible and relevant in AI citation systems, follow a consistent update schedule. Update product pages monthly, refresh data-heavy guides quarterly, and revisit blog posts quarterly to annually. For broader or less time-sensitive content, an annual review and update should suffice.

Why does this matter? Regular updates send a clear signal to AI-driven search engines that your content is fresh and authoritative. These systems tend to prioritize recent, well-maintained material, so sticking to a systematic refresh plan can help ensure your content stays relevant and doesn't get overlooked.